This is the seventh in a series of posts on the Sony a7RIII (and a7RII, for comparison) spatial processing that is invoked when you use a shutter speed of longer than 3.2 seconds. The series starts here.

Up to now, my tests for the “star-eating” Sony spatial filtering algorithm have been indirect, relying on either Fourier analysis or histograms of dark-frame read noise to detect the filtering. I decided to try something more direct.

I reasoned that there are probably hot pixels in the dark-frame images that, by virtue of their great intensity and their lack of nearby bright pixels, could be considered to be virtually the same thing as one-pixel stars in actual night sky photographs. I wrote some code to analyze dark frame images, looking for pixels that were simultaneously

- a certain number of standard deviations above the mean

- having either no similarly-bright pixels as neighbors or being a certain amount over the mean of the eight neighboring pixels

As criteria for that last test, I set a parameter called starNeighborThreshold, and said that the bright pixel needed to be at least starNeighborThreshold times its brightest neighbor or starNeighborThreshold times the average of its neighbors. I called the number of standard deviations above the mean that were necessary for stardom (sorry, I couldn’t resist) outlierThreshold.
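The two criteria above can be sketched in a few lines of Python. This is only an illustration of the idea, not the code I actually ran: the function name `find_stars`, the list-of-rows input format, the use of the population standard deviation, the use of "at least" for the ratio comparisons, and the decision to skip border pixels are all assumptions made for the sketch. It also assumes the pixel values in the channel are non-negative, so that the ratio tests behave sensibly.

```python
from statistics import fmean, pstdev


def find_stars(plane, outlier_threshold, star_neighbor_threshold):
    """Return (row, col) positions of isolated bright pixels in one raw channel.

    plane is a list of equal-length rows of non-negative pixel values
    (an assumed input format, chosen to keep the sketch dependency-free).
    """
    values = [v for row in plane for v in row]
    # Criterion 1: a certain number of standard deviations above the mean.
    cutoff = fmean(values) + outlier_threshold * pstdev(values)

    stars = []
    # Border pixels are skipped because they lack a full set of eight neighbors.
    for r in range(1, len(plane) - 1):
        for c in range(1, len(plane[0]) - 1):
            pixel = plane[r][c]
            if pixel <= cutoff:
                continue
            neighbors = [
                plane[r + dr][c + dc]
                for dr in (-1, 0, 1)
                for dc in (-1, 0, 1)
                if (dr, dc) != (0, 0)
            ]
            # Criterion 2: the pixel must be starNeighborThreshold times its
            # brightest neighbor, or that many times the neighbor average.
            beats_brightest = pixel >= star_neighbor_threshold * max(neighbors)
            beats_average = pixel >= star_neighbor_threshold * fmean(neighbors)
            if beats_brightest or beats_average:
                stars.append((r, c))
    return stars
```

Calling `len(find_stars(plane, outlier_threshold, star_neighbor_threshold))` on each raw channel gives a star count for that channel. Plain Python loops like these are slow on a full-resolution frame; an array library would be the practical choice, but the logic would be the same.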

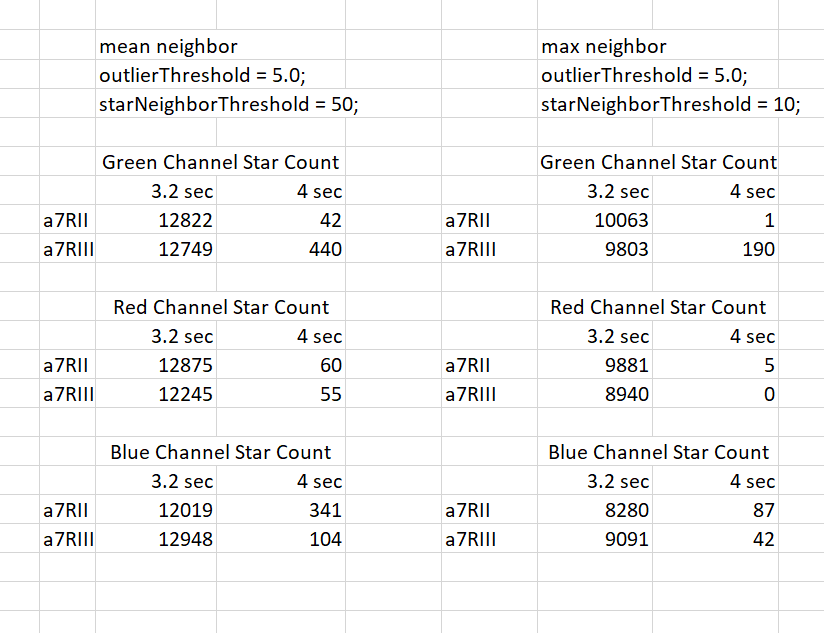

I ran the code against the three raw channels of the 3.2-second and 4-second exposures from the a7RII and a7RIII. Here’s how many stars I got:

You can see that, on the whole, both the a7RII and a7RIII at 4 seconds have a ravenous appetite for what I defined to be stars. However, as was predicted by Mark in a comment to an earlier post on this subject, in the green channel, the a7RIII is not quite as hungry.

Is this a fluke? I dunno. More to come.

Jim, your analysis is very similar to the approach applied in the star stacking freeware, DeepSkyStacker. I’ve used it to test two sets of ten images of the Milky Way shot a few minutes apart at a NZ site with minimal light pollution with a ZM Distagon 35 on my A7RII at ISO 6400. I shot one set at 3.2 seconds and the second at 4 seconds, i.e., where star-eating first kicks in. DSS detected an average of 21,264 stars in the 3.2-second set, but only 12,061 in the 4-second set, a reduction of 43% despite the longer exposure. You’re very welcome to access those images if you want to extend your testing to real-world images.

I have not yet seen any test of the A7rIII star eating behavior (can’t really call it a feature) in combination with the sensor / pixel shifting feature of the A7rIII.

If I were the engineer designing a feature like that I would be tempted to move hot pixel elimination to the software that combines the 4 exposures.

Have you made any experiments with sensor / pixel shifting?

I don’t have an a7RIII. Yet.