I’ve been made aware of an objection to the Sony a7RII star-eater workaround that I documented in this post. A poster on Fred Miranda claimed that using continuous mode would result in greater sensor heating (and therefore more read noise) than using single shot mode. That didn’t seem right to me, but things that seemed nearly self-evident have turned out not to be so simple when I started testing, and I thought that could possibly be the case here.

It’s not.

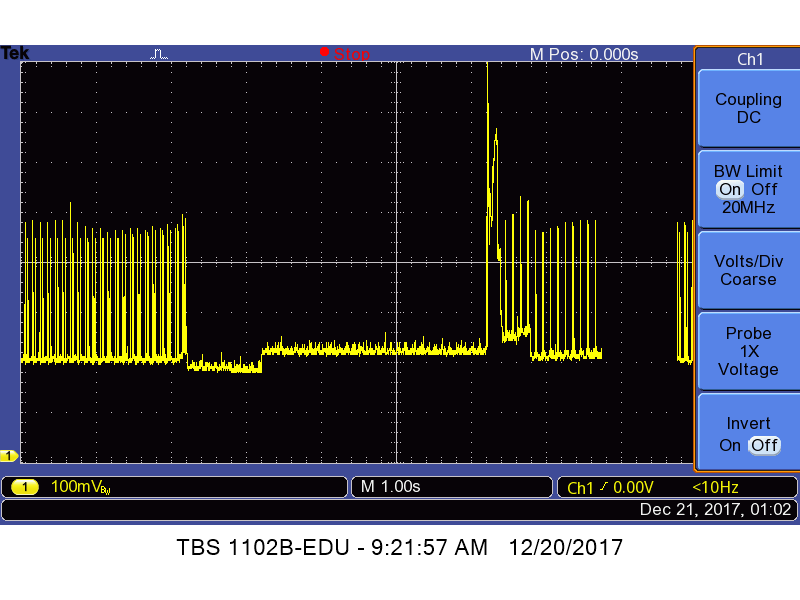

Here is an oscilloscope trace of an a7RII’s battery current versus time when making a 4-second single shot EFCS exposure at ISO 640 in uncompressed raw mode:

No lens was used, just a body cap. The time base is set to 1 second per division. The vertical axis is about 130 milliamps per division. Just after the third division starts, the shutter is tripped. You can see the scanning of the sensor stop then. Current drops for the first second of the exposure, then increases slightly for the other three seconds. I’ve no idea why that happens. Then, at the end of the exposure, the current increases abruptly for about 200 milliseconds as the sensor is read, and the scanning resumes.

Average current draw during the higher-current part of the exposure is 330 milliamps, as measured by a digital milliammeter with 100 milliohms impedance.
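For a sense of scale, here is a minimal sketch of the arithmetic behind that reading: the voltage dropped across the meter, and the rough power the camera draws during the exposure. The current and meter impedance come from the measurement above. The 7.2 V battery voltage is an assumption (a nominal pack voltage, not something I measured here), and the function names are just for illustration.

```python
# Rough burden-voltage and power estimate for the milliammeter reading.
# NOMINAL_BATTERY_V is an ASSUMPTION (nominal pack voltage), not a measurement.

METER_RESISTANCE_OHM = 0.100  # 100 milliohms, from the text
EXPOSURE_CURRENT_A = 0.330    # 330 mA average during the exposure, from the text
NOMINAL_BATTERY_V = 7.2       # assumed nominal battery voltage


def burden_voltage(current_a: float, resistance_ohm: float) -> float:
    """Voltage dropped across the meter by Ohm's law, V = I * R."""
    return current_a * resistance_ohm


def power_watts(voltage_v: float, current_a: float) -> float:
    """Electrical power drawn from the supply, P = V * I."""
    return voltage_v * current_a


drop_v = burden_voltage(EXPOSURE_CURRENT_A, METER_RESISTANCE_OHM)
power_w = power_watts(NOMINAL_BATTERY_V, EXPOSURE_CURRENT_A)

print(f"Meter drop: {drop_v * 1000:.0f} mV")
print(f"Drop as share of supply: {drop_v / NOMINAL_BATTERY_V:.2%}")
print(f"Approximate power during exposure: {power_w:.2f} W")
```

Under that assumed voltage, the meter drops about 33 millivolts, well under one percent of the supply, so it shouldn't disturb the camera much. Since heating follows power, equal current in two shutter modes at the same supply voltage means equal heating.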

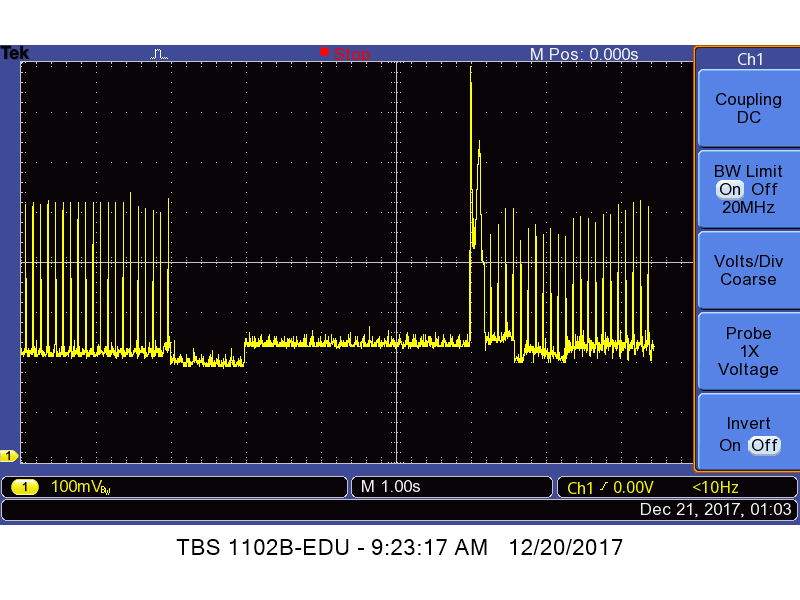

Now we’ll look at an exposure under the same conditions except that the shutter mode is continuous high:

The current draw is essentially the same. The milliammeter says that, too.

This time gut feel wins one.

Great work, Jim 🙂 I have to wonder how you got your meter between the battery and the camera, LOL. And thanks for finding this A7r II star-eater workaround. I can hardly believe that Sony hasn’t yet provided an off switch in the firmware.

Here’s the set-up, Joe:

http://blog.kasson.com/the-last-word/refining-the-a7rii-battery-current-measurements/