This is one in a series of posts on the Sony alpha 7 R Mark IV (aka a7RIV). You should be able to find all the posts about that camera in the Category List on the right sidebar, below the Articles widget. There’s a drop-down menu there that you can use to get to all the posts in this series; just look for “A7RIV”.

Yesterday, I conducted an experiment to prove or disprove the theory that the a7RIV didn’t resolve as well as the a7RIII, and it indicated that theory was wrong. But there was one problem: I used Lightroom to do the raw development. I equalized the parameters as best I could, but there was the slight chance that Lightroom, in its Adobe-knows-best opacity, was treating the cameras differently under the covers.

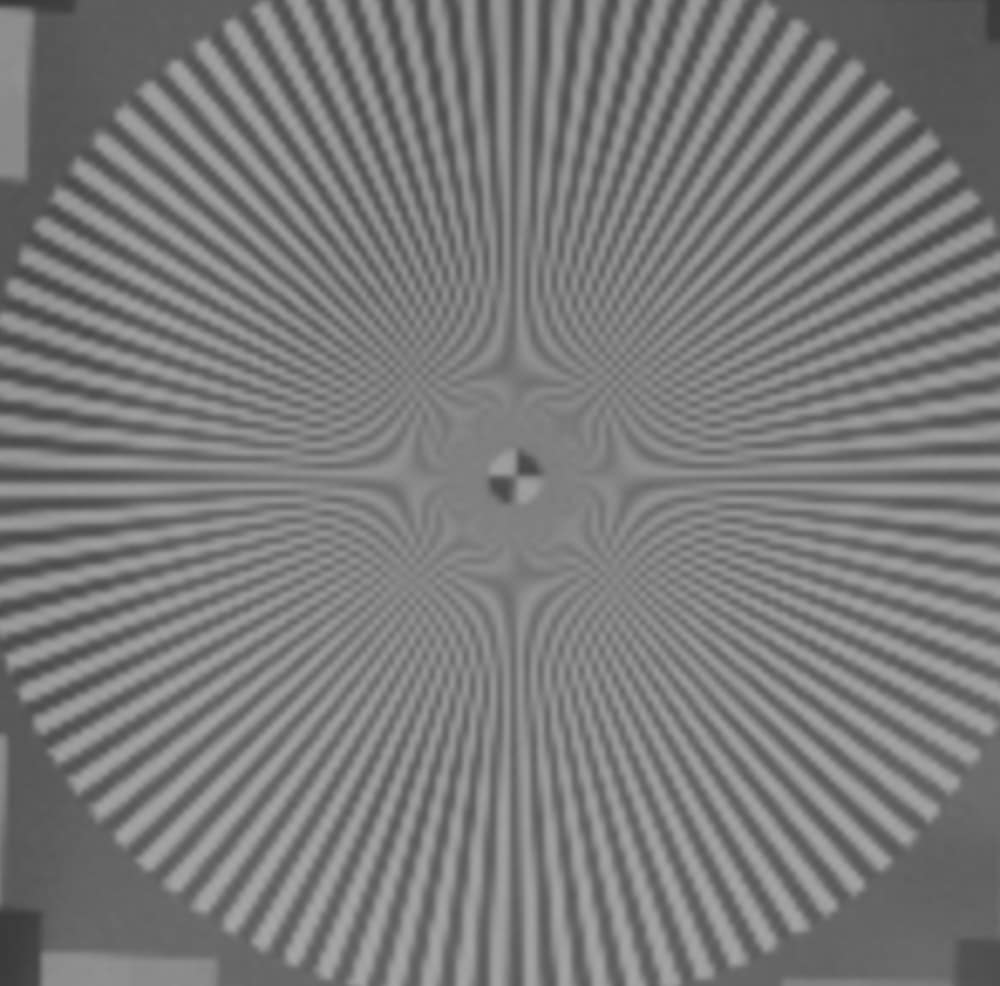

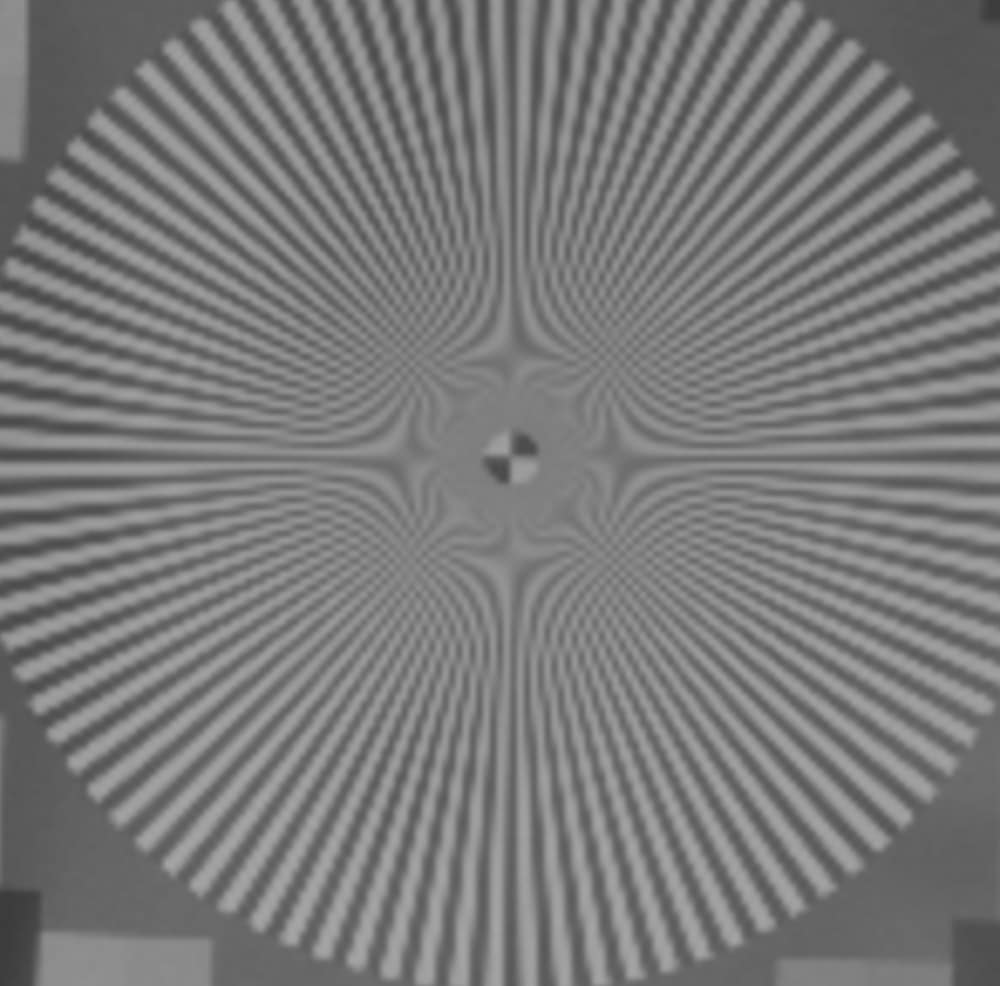

In order to remove that bit of doubt, I took a look at the two green raw layers of the files. They looked the same, so I’ll just show you the G2 layer here (that’s the green pixels in the same rows as the blue ones). These images have not been sharpened at all, so they will look fuzzy. They are also highly magnified to the same field of view for the two cameras.
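If you want to pull a G2 layer out of an undemosaiced raw file yourself, here is a minimal sketch of the idea. It is not the tooling used for the images in this post. It assumes the raw mosaic has already been read into a list of rows and that you know the 2x2 color filter layout; `extract_g2` and the `"RGGB"` default are names and assumptions made up for the illustration.

```python
def extract_g2(mosaic, pattern="RGGB"):
    """Return the G2 plane of a Bayer mosaic: the green sites that share rows with blue.

    mosaic  -- list of equal-length rows of raw values (hypothetical input format)
    pattern -- the 2x2 color filter tile read row by row; "RGGB" is an assumption
    """
    if sorted(pattern) != sorted("RGGB"):
        raise ValueError("pattern must contain one R, two G and one B")
    blue = pattern.index("B")
    row = blue // 2          # tile row that holds the blue site
    col = 1 - (blue % 2)     # the other column in that row
    if pattern[2 * row + col] != "G":
        raise ValueError("not a Bayer layout: blue's row neighbor is not green")
    # Take every second row and every second column, starting at the G2 site.
    return [line[col::2] for line in mosaic[row::2]]


# Tiny 4x4 example; with RGGB, G2 sits in odd rows and even columns.
mosaic = [
    [10, 20, 11, 21],
    [30, 40, 31, 41],
    [12, 22, 13, 23],
    [32, 42, 33, 43],
]
g2 = extract_g2(mosaic)
```

The result has half the width and half the height of the mosaic, and no interpolation or sharpening has touched it, which is the point of looking at a single raw layer.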

The a7RIV clearly has higher resolution — it can resolve detail nearer the center of the star — and similar contrast. The lens appears to be laying down high-frequency detail at high contrast well beyond the Nyquist frequency for both sensors.
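To put a rough number on "nearer the center": on a sinusoidal Siemens star with N cycles, one cycle at radius r spans 2πr/N, and sampling needs at least two samples per cycle, so the Nyquist radius is N times the sampling pitch divided by π. The sketch below works that out. The cycle count is hypothetical, and the pixel pitches are approximate, commonly quoted figures rather than numbers from this post. A single raw layer such as G2 is sampled at twice the pixel pitch.

```python
import math


def nyquist_radius_um(cycles, sample_pitch_um):
    """Radius on the sensor (in micrometers) where a Siemens star with
    `cycles` cycles per revolution reaches the Nyquist frequency for the
    given sampling pitch. Inside this radius, detail is aliased."""
    return cycles * sample_pitch_um / math.pi


CYCLES = 72              # hypothetical star, not the one used in this post
PITCH_A7R4_UM = 3.76     # approximate pixel pitch, assumed
PITCH_A7R3_UM = 4.51     # approximate pixel pitch, assumed

# A single Bayer layer (e.g. G2) has one sample every two pixels.
r4 = nyquist_radius_um(CYCLES, 2 * PITCH_A7R4_UM)
r3 = nyquist_radius_um(CYCLES, 2 * PITCH_A7R3_UM)
ratio = r4 / r3          # equals the ratio of the pixel pitches
```

Whatever the actual star, the ratio of the two radii is just the ratio of the pitches, so the finer-pitch sensor should hold real detail proportionally closer to the center before aliasing takes over.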

You might be asking why I chose such a contrived situation to test the hypothesis. Why not just shoot a highly detailed scene with both cameras and the same lens, develop them the same way, enlarge the images to common-sized large images with some fancy resampler like GigaPixel AI (which did very well in tests I posted here last week), and just eyeball them? The reason is that there are too many uncontrolled variables in that protocol. There will clearly be aliasing in all the samples, but without a target like the Siemens Star, it’s hard to sort out aliased fake detail from real sub-Nyquist detail. Also, there’s the issue of unknown differences in the raw processing that I talked about earlier in this post. And finally, we know that GigaPixel AI makes things up. It makes believable guesses; that’s its utility. But they are guesses just the same. And it’s going to be guessing about what to do about parts of the images that are highly aliased to start out with. Another confounding issue is that the believability of GigaPixel AI’s inventions is image-content dependent.

If what we are interested in is the performance of the camera itself, it’s best to strip out everything but the camera from the test, if we can. If we are testing a hypothesis about resolution, it’s best to use a target that removes other image variables from the picture.

And, by the way, the frequency domain testing I did earlier indicates that Sony is not playing games with the sharpness of the raw files at these shutter speeds.

“Yesterday, I conducted an experiment to prove or disprove the theory that the a7RIV didn’t resolve as well as the a7RIII, and it indicated that theory was wrong”.

— J. K.

Jim, I presume you meant to say “hypothesis” rather than “theory”! Doubly-so since it turned out to be so easily falsifiable! 🙂

I stand corrected.