This is the tenth in a series of posts on color reproduction. The series starts here.

Now that I’ve tested your tolerance for theory, let’s jump right into practice, at the deep end.

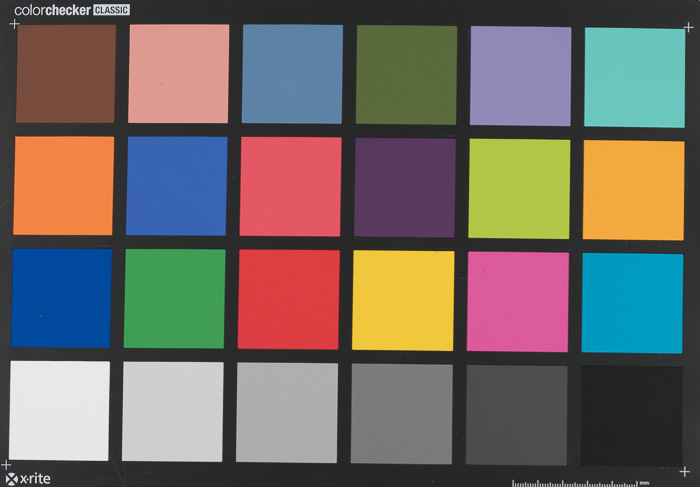

Let’s say I’ve got a Sony a7RII in my hand, and I’d like to know how accurate the colors are when the raw image is developed in Lightroom with the Adobe Standard profile and default settings. I also have a Macbeth Classic Color Checker that’s of newish vintage (they do fade, you know).

Since I had both of those things, I decided to do a quick and dirty test.

Here’s what I did.

I velcroed the chart to a convenient door.

I lit it with two 12×12 inch Westcott LED panels that are capable of producing light with color temperatures from 2800K to 6000K. They do this by mixing the output of two kinds of LEDs, so there aren’t really as many independent illuminants as you might think. The panels were about three feet from the target, and at a 45-degree angle to it to minimize reflections.

I shot the target with a Sony 90mm f/2.8 macro lens at f/5.6 with the camera in A mode with +1 EV exposure compensation.

I made two exposures at each of the following indicated color temperatures: 2800K, 3200K, 4000K, 5000K, 6000K.

I brought all the images into Lightroom, where they looked like this:

That’s with the camera’s auto white balance setting, and with the panels set to 4000K.

I cropped the images to the chart, and white balanced each to the third gray square from the left. That made the 4000K image look like this:

I left all the other settings at their defaults, and exported all the images to Photoshop as layers in ProPhoto RGB, with 15+ bits per primary.

In Ps, I applied a 10 pixel Gaussian blur to each layer.
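The point of the blur, presumably, is that a reading taken at one spot then reflects a weighted average of the neighborhood rather than one noisy pixel. Here’s a minimal sketch of that idea in Python. It’s not what Photoshop does internally; the function name, the nested-list image layout, and the choice to cut the kernel off at three sigma are all my assumptions for illustration.

```python
import math

def gaussian_sample(image, cx, cy, sigma):
    """Gaussian-weighted average of the pixels around (cx, cy).

    image is a list of rows, each row a list of (r, g, b) tuples.
    The kernel is truncated at 3 sigma (an assumption of this sketch).
    """
    half = int(math.ceil(3 * sigma))
    height, width = len(image), len(image[0])
    # Refuse windows that run off the image instead of wrapping silently.
    if cx - half < 0 or cy - half < 0 or cx + half >= width or cy + half >= height:
        raise ValueError("sampling window extends past the image edge")

    total = [0.0, 0.0, 0.0]
    weight_sum = 0.0
    for dy in range(-half, half + 1):
        for dx in range(-half, half + 1):
            w = math.exp(-(dx * dx + dy * dy) / (2.0 * sigma * sigma))
            pixel = image[cy + dy][cx + dx]
            for i in range(3):
                total[i] += w * pixel[i]
            weight_sum += w
    # Normalize so a uniform patch comes back unchanged.
    return tuple(t / weight_sum for t in total)
```

On a perfectly uniform patch this hands back the patch value; on a noisy one, a single outlier pixel is diluted by its neighbors. Keep the sampling point well inside the patch so the window doesn’t pick up the black borders between squares.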

Then I measured the eight-bit PPRGB values, entering them into the Lab version of Bruce Lindbloom’s Color Checker spreadsheet. Here’s what the PPRGB page looked like for the 6000K exposure:

There are lots of things to look at here, and I’ll get to a lot of them in the next few posts. Between now and then, imagine what you’d do to make this test more accurate.
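If you’d like to see roughly what happens between an eight-bit PPRGB reading and a Lab value, here’s a sketch in Python. It is not the spreadsheet’s actual formulas. It assumes the ROMM RGB tone curve (gamma 1.8 with a short linear toe), the ProPhoto-to-XYZ matrix for a D50 white as I recall it from Bruce Lindbloom’s site, and CIE 1976 Delta E as the error measure; the function names are mine.

```python
import math

# ProPhoto (ROMM) RGB -> XYZ, D50 reference white.
# Assumed matrix values; check them against a trusted source before relying on them.
PPRGB_TO_XYZ = (
    (0.7976749, 0.1351917, 0.0313534),
    (0.2880402, 0.7118741, 0.0000857),
    (0.0000000, 0.0000000, 0.8252100),
)
WHITE_D50 = (0.96422, 1.00000, 0.82521)

def decode_romm(v8):
    """Convert an 8-bit encoded value to linear light using the ROMM RGB curve."""
    v = v8 / 255.0
    # Linear toe below 16/512, gamma 1.8 above it.
    return v / 16.0 if v < 16.0 / 512.0 else v ** 1.8

def lab_f(t):
    """The CIE Lab companding function."""
    eps = 216.0 / 24389.0
    kappa = 24389.0 / 27.0
    return t ** (1.0 / 3.0) if t > eps else (kappa * t + 16.0) / 116.0

def pprgb8_to_lab(rgb8):
    """8-bit ProPhoto RGB triple -> (L*, a*, b*) relative to D50."""
    lin = [decode_romm(c) for c in rgb8]
    xyz = [sum(m * c for m, c in zip(row, lin)) for row in PPRGB_TO_XYZ]
    fx, fy, fz = (lab_f(v / w) for v, w in zip(xyz, WHITE_D50))
    return (116.0 * fy - 16.0, 500.0 * (fx - fy), 200.0 * (fy - fz))

def delta_e_76(lab1, lab2):
    """CIE 1976 color difference: Euclidean distance in Lab."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(lab1, lab2)))
```

To use it, call `pprgb8_to_lab` on the measured triple for a patch, then `delta_e_76` against that patch’s reference Lab value. Eight-bit readings throw away precision that the 15+ bit file had, which is one of the things a more careful test would fix.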

spectrometer… for both light and target, measured several times and averaged… no LEDs, but a light with a better spectrum… flat-field shot… drape everything around the target (floor, ceiling, sides) in matte black cloth, with holes only for the lens and the lights, and no bright background behind the target (why would you want reflections back into your lens?)… average several raw shots… make the target even smaller within the frame, even with the 90mm macro at f/5.6… consider several different exposures (separated by 1 EV), with the spectral measurements of the target adjusted proportionally…