For the past few days, I’ve been reporting on the “ISOlessness” of three cameras (the D4, D810, and alpha 7II) using a metric I devised. Given an ISO range and a shadow level, it returns the signal-to-noise ratio (SNR) of that level at the highest ISO in the range and at each of the other ISOs. It assumes the exposure doesn’t change and that the images made at the lower ISOs are pushed in post.
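As a rough illustration of what that metric measures, here is a minimal sketch under a simple model that the post itself does not use. The model's assumptions are as follows. Each ISO is characterized by a single input-referred read noise in electrons. The `read_noise_by_iso` values are hypothetical placeholders, not measurements of any of these cameras. The shadow level is a fixed number of captured electrons. Pushing in post is treated as noiseless digital gain that scales signal and noise equally, so it leaves SNR unchanged.

```python
import math


def patch_snr(signal_e: float, read_noise_e: float) -> float:
    """SNR of a uniform patch: photon shot noise (sqrt of signal) plus read noise, in electrons."""
    return signal_e / math.sqrt(signal_e + read_noise_e ** 2)


def snr_across_isos(signal_e: float, read_noise_by_iso: dict[int, float]) -> dict[int, float]:
    """SNR of the same shadow level at each ISO with the exposure held constant.

    Pushing a lower-ISO image in post multiplies signal and noise by the same
    factor, so in this model the only thing that changes with ISO is the
    input-referred read noise.
    """
    return {iso: patch_snr(signal_e, rn) for iso, rn in sorted(read_noise_by_iso.items())}


# Hypothetical input-referred read noise (electrons) for an imaginary camera.
read_noise_by_iso = {100: 3.0, 400: 1.8, 1600: 1.3, 6400: 1.2}

for iso, value in snr_across_isos(20.0, read_noise_by_iso).items():
    print(f"ISO {iso:>5}: SNR {value:.2f}")
```

In this model, a camera whose read noise barely changes with ISO gives nearly identical SNRs across the range, which is what “ISOless” means here.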

I thought it might be more useful to turn that notion around: given the minimum acceptable SNR, how much must the exposure increase to maintain that SNR as the ISO knob is turned down from the highest ISO?
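Under a simple shot-noise-plus-read-noise model, the inverted question has a closed-form answer. That model is an assumption: the curves in this post come from measured data. Set `S / sqrt(S + R**2)` equal to a target `T` and solve the resulting quadratic for the signal `S` in electrons. That gives `S = (T**2 + sqrt(T**4 + 4 * T**2 * R**2)) / 2`, where `R` is the input-referred read noise at a given ISO. The extra exposure needed at a lower ISO, in stops, is then the base-2 log of the ratio of required signals. The read-noise table below is hypothetical.

```python
import math


def required_signal(target_snr: float, read_noise_e: float) -> float:
    """Electrons needed so that S / sqrt(S + R^2) equals target_snr (positive root of the quadratic)."""
    t2 = target_snr ** 2
    return (t2 + math.sqrt(t2 * t2 + 4.0 * t2 * read_noise_e ** 2)) / 2.0


def exposure_penalty_stops(target_snr: float, read_noise_by_iso: dict[int, float]) -> dict[int, float]:
    """Extra exposure (in stops) needed at each ISO, relative to the highest ISO, to hold target_snr."""
    max_iso = max(read_noise_by_iso)
    baseline = required_signal(target_snr, read_noise_by_iso[max_iso])
    return {
        iso: math.log2(required_signal(target_snr, rn) / baseline)
        for iso, rn in sorted(read_noise_by_iso.items())
    }


# Hypothetical input-referred read noise (electrons) for an imaginary camera.
read_noise_by_iso = {100: 3.0, 400: 1.8, 1600: 1.3, 6400: 1.2}

for target in (5.0, 10.0):
    penalties = exposure_penalty_stops(target, read_noise_by_iso)
    print(target, {iso: round(stops, 2) for iso, stops in penalties.items()})
```

With these made-up numbers, the penalty at the lowest ISO is smaller for a target SNR of 10 than for 5. The higher target requires more signal, so photon shot noise increasingly swamps read noise.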

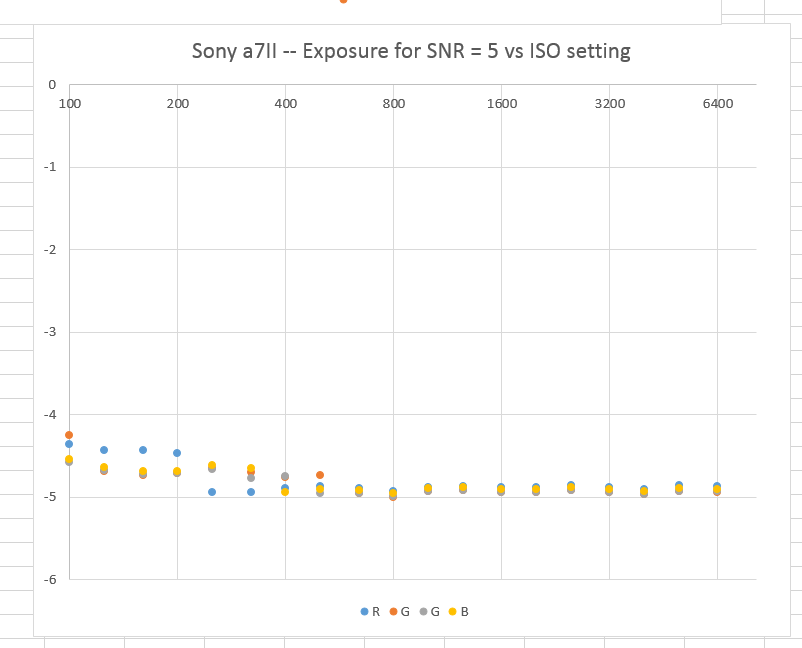

Here are some results for all four raw channels for the alpha 7II at an SNR of 5:

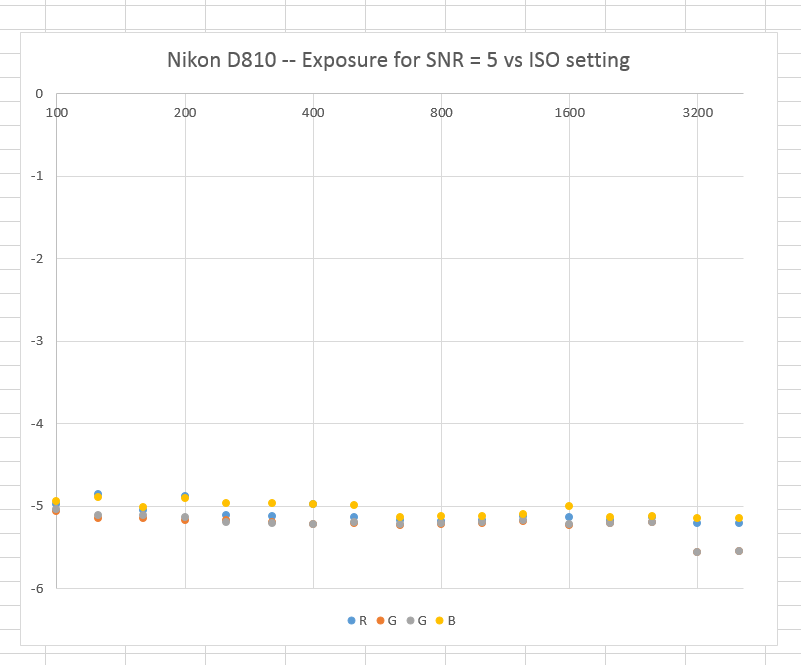

And for the Nikon D810:

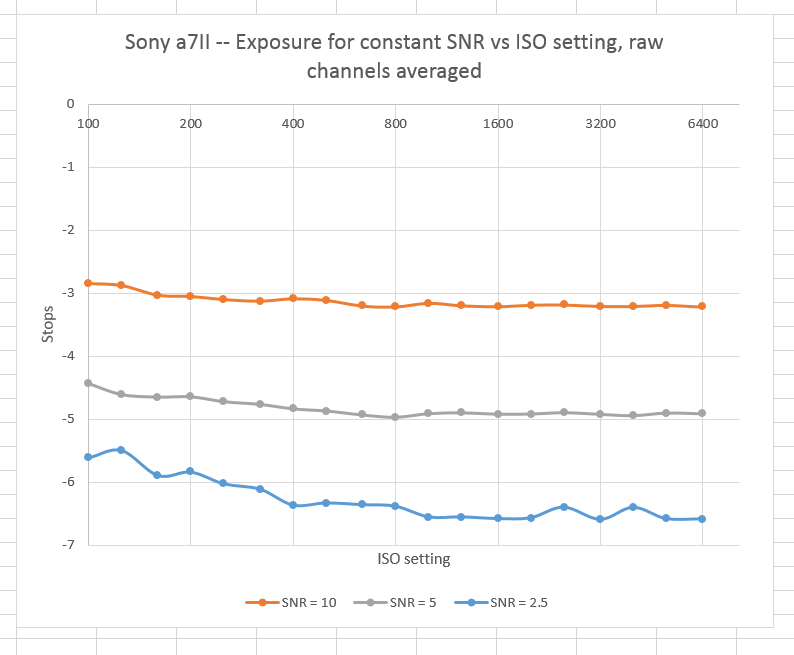

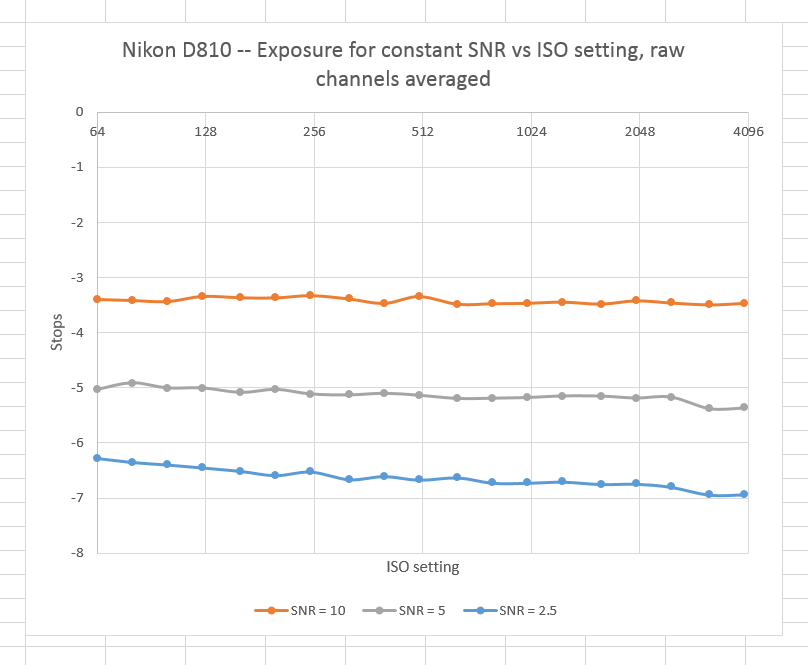

Since there doesn’t appear to be much to be learned from looking at the individual raw channels, we can average the results for each SNR together and present more than one SNR per graph:

Several things are clear from looking at these graphs. First, the D810 is closer to ISOless than the a7II. Second, if you believe that an SNR of 10 is necessary for good quality, the D810 is essentially ISOless from ISO 64 through 4000. The a7II comes within less than half a stop of being so from ISO 100 through 6400.

I didn’t plot the two cameras’ curves on the same graph because that wouldn’t be fair to the Nikon D810, which has higher resolution than the a7II. To compare cameras of different resolutions, the target SNRs have to be adjusted as if all of the images being compared had been ideally converted to a common resolution.
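One way to make that adjustment starts from two assumptions: resampling is ideal, and the noise is spatially uncorrelated (white). Under those assumptions, combining k pixels into one raises SNR by sqrt(k). A camera with more pixels can therefore get by with a lower per-pixel SNR and still match a lower-resolution camera once both are viewed at the same size. The sketch below computes that per-pixel target. The pixel counts are round approximations: about 36 MP for the D810 and about 24 MP for the a7II. The 24 MP common resolution is only an example, since the choice of common resolution is still an open question.

```python
import math


def native_snr_target(common_snr: float, native_pixels: float, common_pixels: float) -> float:
    """Per-pixel SNR needed at native resolution so that, after ideal downsampling
    to common_pixels, the image reaches common_snr.

    Assumes white (spatially uncorrelated) noise, so SNR scales with the square
    root of the ratio of pixel counts. Only meaningful for downsampling.
    """
    if common_pixels > native_pixels:
        raise ValueError("common resolution must not exceed native resolution")
    return common_snr * math.sqrt(common_pixels / native_pixels)


# Approximate sensor pixel counts; the common resolution is an arbitrary example.
cameras = {"D810": 36e6, "a7II": 24e6}
common_pixels = 24e6

for name, pixels in cameras.items():
    print(name, round(native_snr_target(10.0, pixels, common_pixels), 2))
```

Under these assumptions, the D810 needs a per-pixel SNR of about 8.2 to match an a7II at 10 when both are compared at 24 MP.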

Bear in mind that the curves above are lumpy because you are looking at actual measured values, not modeled ones.

What should we pick for a common resolution, and what value should we pick for a barely-acceptable SNR?

Hmm…

Hi Jim

Many thanks for this interesting comparison and your questions. Hm, what do you mean by barely-acceptable SNR?

My thoughts on that:

1) Is your result from the raw file?

2) Is your result from the firmware information?

3) Do you know that the chip and chip software in the camera do the work of producing the raw file?

4) How can you compare raw-file information produced by different raw-file processing (chip software)?

5) Do you know how EXPEED (Nikon) and BIONZ (Sony) work?

6) Do you know Nikon’s and Sony’s philosophies for raw data?

7) Do you know if the firmware is written by the same company? Maybe Tessera?

8) And lastly, how does the lens affect the raw data? And the SNR?

These are my thoughts about SNR and comparisons.

Merry Christmas and a Happy New Year

Jean Pierre

That’s a lot to deal with, but those are questions that deserve consideration. Give me a week or two. I will get to this.

Jim