In yesterday’s post, we saw markedly worse low-signal-level performance when the camera shutter mode is changed from single-shot to continuous. We know from looking at histograms that the analog-to-digital converter (ADC) resolution drops from 13 to 12 bits with that change. Is that sufficient to cause the observed change in the photon transfer curves? I wrote a simulator in Matlab to explore the issue.
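For a sense of scale, a standard approximation (not taken from the simulator) treats quantization as uniform noise with step $\Delta$, which adds in quadrature with the read noise $\sigma_\text{RN}$. Assuming the 12-bit mode covers the same full-scale range as the 13-bit mode, its step is twice as large:

$$
\sigma_\text{total} \approx \sqrt{\sigma_\text{RN}^2 + \frac{\Delta^2}{12}}, \qquad \Delta_{12} = 2\,\Delta_{13}
$$

In 13-bit LSBs, that takes the quantization term from $1/\sqrt{12} \approx 0.29$ LSB to $2/\sqrt{12} \approx 0.58$ LSB. The approximation breaks down when the read noise is well under one step, because the quantization error then stops behaving like independent uniform noise, which is where a simulation earns its keep.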

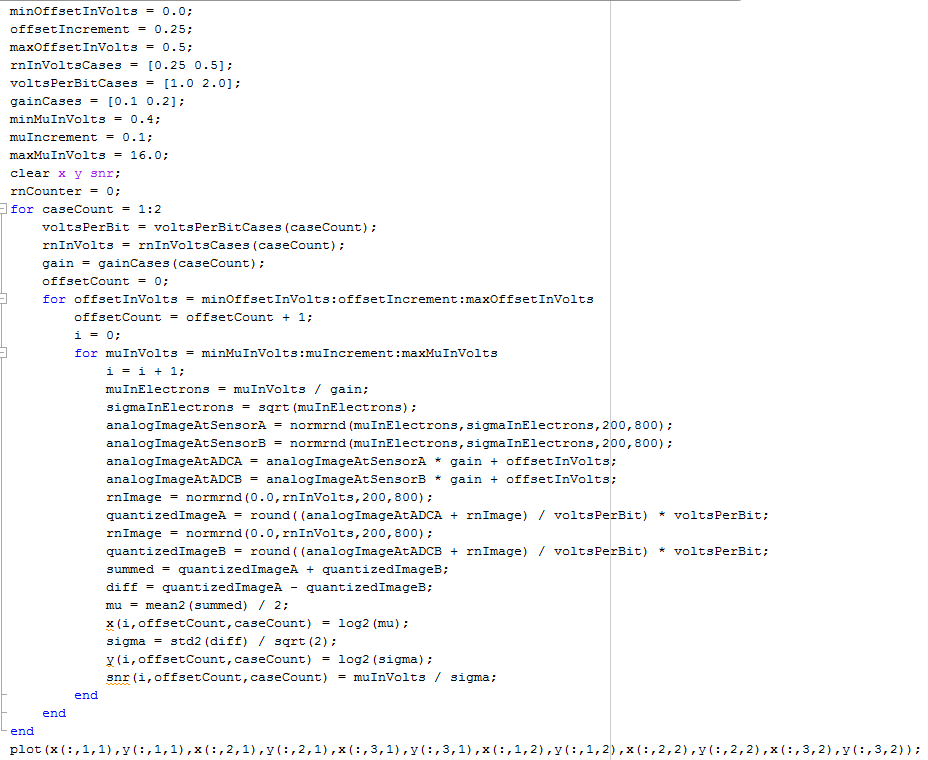

Here’s the code, which simulates the paired captures and the mean and standard deviation calculations performed by the photon transfer curve software that I wrote with Jack Hogan:

Matlab users, contact me if you want the text file.

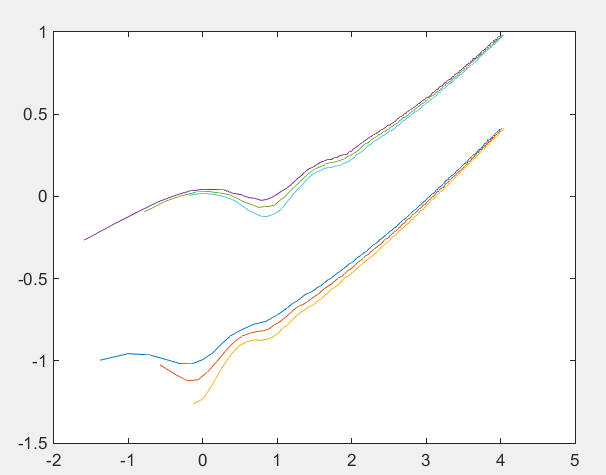
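For readers without Matlab, here is a rough Python sketch of the same kind of paired-capture experiment. It is not a port of the simulator above: the parameter names (`e_per_lsb13`, `offset_lsb13`), the Poisson sampler, and the choice to report everything in 13-bit LSBs are assumptions made for illustration. Each call makes two flat-field frames with photon shot noise and Gaussian read noise, adds an offset, quantizes with a step of one 13-bit LSB (two for a 12-bit ADC), clips at zero, and then reports the pair's mean minus the offset and the standard deviation of the difference divided by √2.

```python
import math
import random
import statistics


def poisson(rng: random.Random, lam: float) -> int:
    """Poisson draw: Knuth's method for small means, normal approximation above 50."""
    if lam <= 0:
        return 0
    if lam > 50:
        return max(0, round(rng.gauss(lam, math.sqrt(lam))))
    limit, k, p = math.exp(-lam), 0, 1.0
    while True:
        p *= rng.random()
        if p <= limit:
            return k
        k += 1


def simulate_pair(mean_e, read_noise_e, e_per_lsb13, offset_lsb13,
                  adc_bits, n_pixels=20_000, seed=1):
    """Simulate two flat-field captures; return (mean, std) in 13-bit LSBs.

    Hypothetical parameterization: e_per_lsb13 is the conversion gain in
    electrons per 13-bit LSB; a 12-bit ADC uses a step twice as large.
    """
    rng = random.Random(seed)
    step = 2 ** (13 - adc_bits)          # quantization step in 13-bit LSBs
    max_code = 2 ** adc_bits - 1
    frames = []
    for _ in range(2):
        frame = []
        for _ in range(n_pixels):
            electrons = poisson(rng, mean_e) + rng.gauss(0.0, read_noise_e)
            analog = electrons / e_per_lsb13 + offset_lsb13      # 13-bit LSB units
            code = min(max(round(analog / step), 0), max_code)   # ADC with clipping
            frame.append(code * step)                            # back to 13-bit LSBs
        frames.append(frame)
    a, b = frames
    # Calculated mean: average of the pair with the known offset removed.
    mean = (statistics.fmean(a) + statistics.fmean(b)) / 2 - offset_lsb13
    # Differencing cancels fixed-pattern noise; the difference carries the
    # temporal noise of both frames, hence the division by sqrt(2).
    std = statistics.stdev([x - y for x, y in zip(a, b)]) / math.sqrt(2)
    return mean, std
```

Sweeping `mean_e` over a range of levels and plotting `log2(mean)` against `log2(std)` gives curves of the kind shown here. At very low signal with little read noise, the coarser step can actually report less noise, because undithered quantization swallows small fluctuations.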

Here’s what we get if we plot three offset voltages for each of two cases that differ as follows: the ADC resolution by one bit, the read noise by a factor of two (the higher-precision ADC gets the lower RN), and the gain by a factor of two (the lower-precision ADC gets the higher gain):

The horizontal axis is the calculated (not the actual) mean in stops above the higher-precision ADC’s LSB, and the vertical axis is the calculated standard deviation on the same log scale. The curves have similar shapes and resemble the real curves you saw yesterday, but the higher-precision, lower-noise, lower-gain case has lower standard deviations. No surprises here.
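To put both ADCs on the same axes, each result has to be expressed in the higher-precision ADC's LSBs before taking base-2 logarithms, since one 12-bit count spans two 13-bit LSBs. A minimal sketch, with a hypothetical helper name; it returns `None` for non-positive values, which can occur for the calculated mean at very low signal and have no logarithm.

```python
import math


def to_plot_coords(mean_dn, std_dn, adc_bits, ref_bits=13):
    """Convert (mean, std) in an ADC's own counts to stops above the
    reference (higher-precision) ADC's LSB.

    Returns None when either value is non-positive, since log2 is undefined.
    """
    if mean_dn <= 0 or std_dn <= 0:
        return None
    scale = 2 ** (ref_bits - adc_bits)  # one 12-bit count = two 13-bit LSBs
    return math.log2(mean_dn * scale), math.log2(std_dn * scale)
```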

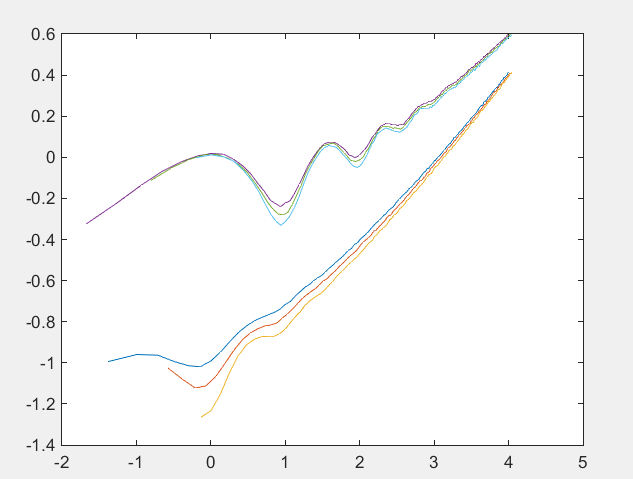

Now let’s leave the gain the same for the two sets of curves, as you would expect Sony to do if they had to lower the precision to get the data out faster:

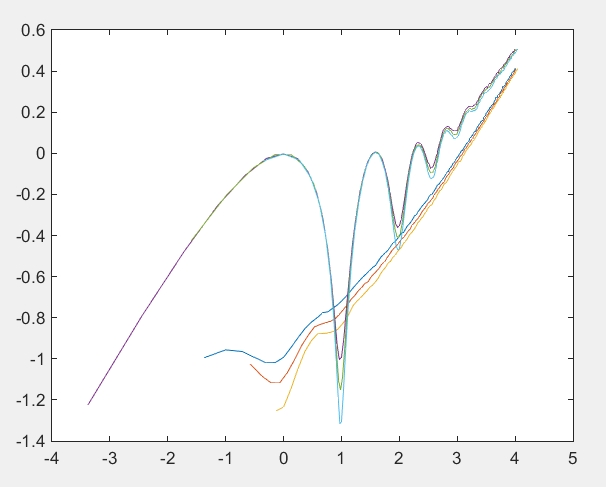

Now let’s make the read noise the same in both sets of curves:

My take, looking at these curves and the ones from yesterday’s post, is that we are seeing a real increase in read noise when the shutter mode is changed to continuous, not just an increase in quantization noise, though perhaps short of a doubling of the non-quantization read noise.

Bill Claff suggested to me yesterday that the read noise itself might be rising. My money was on mostly quantization noise. Looks like I was wrong.
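One way to put a number on that conclusion, assuming the textbook Δ/√12 model applies (read noise large enough to dither the ADC), is to strip the quantization term out of each mode's measured noise floor in quadrature and compare what is left. The function name and inputs below are hypothetical; `step` is the ADC step in 13-bit LSBs (1 for single-shot, 2 for continuous).

```python
import math


def implied_read_noise(measured_std, step):
    """Remove the uniform-quantization term, step/sqrt(12), in quadrature.

    Only meaningful when measured_std is comfortably above the step,
    i.e. when read noise dithers the ADC; returns 0.0 if nothing is left.
    """
    q = step / math.sqrt(12)
    if measured_std <= q:
        return 0.0
    return math.sqrt(measured_std ** 2 - q ** 2)
```

If the implied read noise for continuous mode comes out higher than for single-shot mode, quantization alone does not account for the difference.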

On the theory that authors want to hear all reactions to their writing, I’ll admit to you that I keep hoping that someday soon you will switch from Matlab to R. You might like R better, and many people have already switched to R. If not, it looks like I’ll be learning Matlab when I have more time.

Gee, Jerry, at my advanced age it was hard enough going from Smalltalk to Matlab…