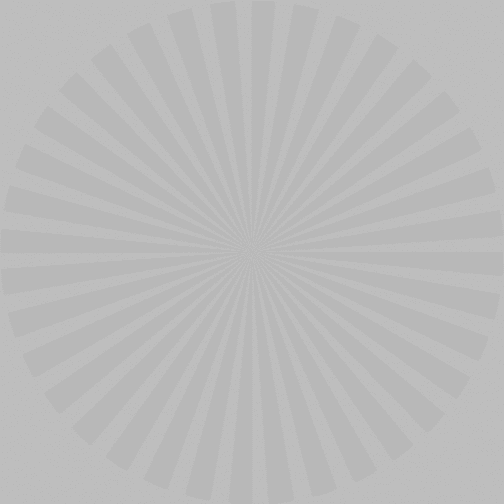

A few days ago, I made this post about the visual detectability of information with a signal-to-noise ratio (SNR) of less than one. If you don’t remember it, you should at least go back and skim it, or this post won’t make much sense to you.

It occurs to me that the normalization I used in the preceding post, which scaled the combined signal-plus-noise image, wasn’t really fair. As the signal went down, it became less visible because of the normalization alone, even apart from the effect of the noise. So I went back and ran the program again, this time normalizing the output image so that the signal level stays the same. This means that as the signal-to-noise ratio goes down, more of the noise gets clipped.
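To make the difference between the two normalizations concrete, here is a minimal sketch in plain Python. It is not the program from the post: the test pattern (a bright bar on a dark background), the image size, the definition of SNR as signal amplitude divided by noise standard deviation, and all the function names are hypothetical choices for illustration. The first normalization stretches the noisy image’s own min..max onto the display range, so the signal step shrinks as the noise grows. The second maps the signal’s fixed range onto the display range and clips everything outside it.

```python
import random

# Hypothetical test pattern (an assumption, not the post's image):
# a vertical bar of amplitude 1.0 on a 0.0 background.
def make_signal(width, height):
    return [[1.0 if width // 3 <= x < 2 * width // 3 else 0.0 for x in range(width)]
            for _ in range(height)]

# Add zero-mean Gaussian noise. SNR is assumed here to mean
# (signal amplitude) / (noise standard deviation), with amplitude 1.0.
def add_noise(signal, snr, rng):
    sigma = 1.0 / snr
    return [[v + rng.gauss(0.0, sigma) for v in row] for row in signal]

# Earlier approach: stretch the min..max of the combined (signal + noise)
# image onto 0..1. The fixed 1.0 signal step then takes up a smaller
# share of the display range as the noise grows.
def normalize_to_combined(img):
    lo = min(min(row) for row in img)
    hi = max(max(row) for row in img)
    return [[(v - lo) / (hi - lo) for v in row] for row in img]

# Revised approach: map the signal's own range (sig_lo..sig_hi) onto 0..1
# so the signal step always spans the full display range; noisy values
# outside that range are clipped to black or white.
def normalize_to_signal(img, sig_lo=0.0, sig_hi=1.0):
    span = sig_hi - sig_lo
    return [[min(1.0, max(0.0, (v - sig_lo) / span)) for v in row] for row in img]

# Fraction of input pixels that fall outside the signal range, i.e. the
# pixels normalize_to_signal() will clip.
def clipped_fraction(img, sig_lo=0.0, sig_hi=1.0):
    pixels = [v for row in img for v in row]
    return sum(1 for v in pixels if v < sig_lo or v > sig_hi) / len(pixels)

if __name__ == "__main__":
    rng = random.Random(0)
    signal = make_signal(96, 64)
    for snr in (2.0, 1.0, 0.5, 0.25):
        noisy = add_noise(signal, snr, rng)
        print(f"SNR {snr}: {clipped_fraction(noisy):.0%} of pixels clipped")
```

Running it shows the clipped fraction climbing as the SNR drops, which is the trade-off described above: the signal keeps its full display contrast, and the price is that more and more of the noise saturates to pure black or white.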

Autonomous vehicles will have to read signs at night with very poor illumination (low SNR…). So this is important stuff.