I have long argued that the best way to judge the exposure of a raw file is by looking at the raw data, so the title of this post should come as no surprise. However, I have recently been looking at a raw file that demonstrates this to an extent that surprised me, and I thought I’d share it with you all. It’s not my image, so I won’t show it to you. It appears to be a sunrise or sunset.

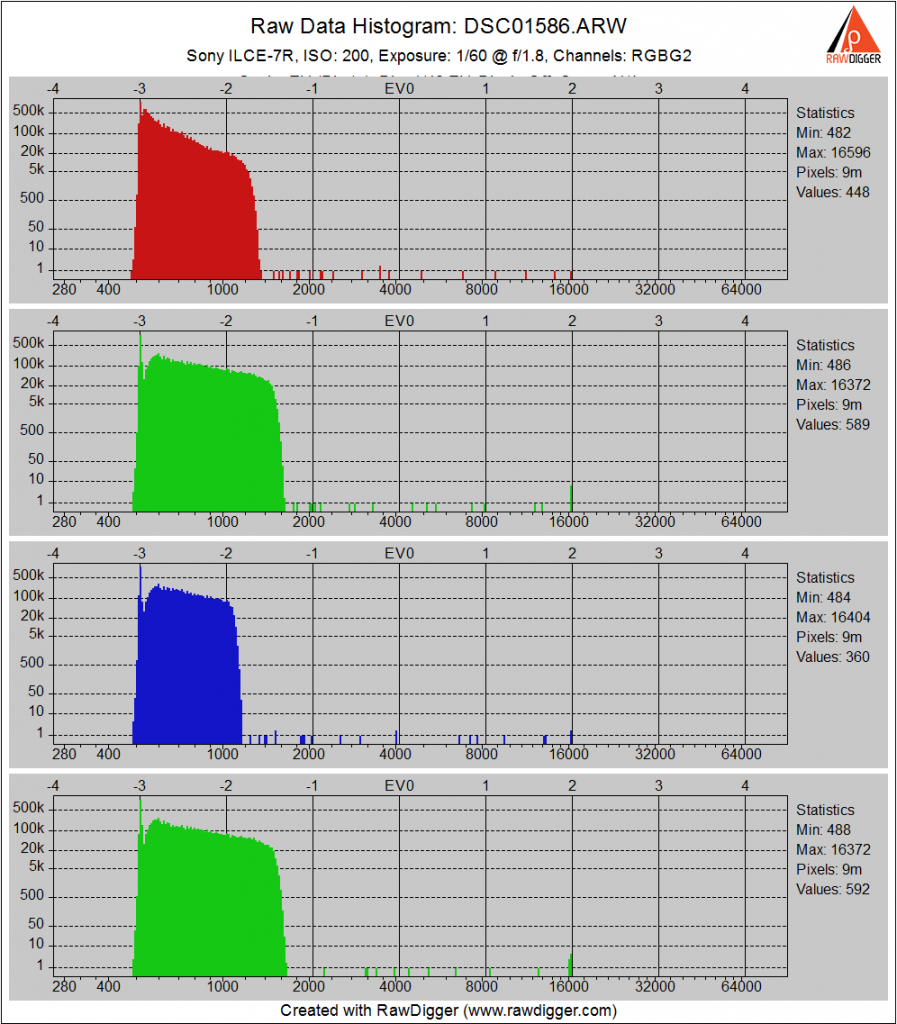

Here’s what the raw histogram looks like:

The horizontal axis is the raw count, or data number. Full scale on the Sony a7R, which was the camera used, is about 16000 counts. The black point is 512.

Looking at it with a logarithmic vertical axis:

You can see that, except for isolated single-pixel buckets from outliers on the sensor, there is no data above a count of about 1100 in the red and blue channels, or above about 1700 in the green channels. Subtracting the black point leaves us with about 600 in the red and blue and about 1200 in the green. That means, in ETTR terms, that the image is almost 4 stops underexposed.
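The "almost 4 stops" figure can be checked with a little arithmetic. Here is a minimal sketch, assuming the approximate full scale (16000) and black point (512) quoted above; the function name `stops_below_full_scale` is just an illustrative helper, not part of any raw tool.

```python
import math

FULL_SCALE = 16000   # approximate raw full scale quoted in the post
BLACK_POINT = 512    # black point quoted in the post


def stops_below_full_scale(raw_count, full_scale=FULL_SCALE, black_point=BLACK_POINT):
    """Return how many stops a raw count sits below full scale.

    Both the count and full scale have the black point subtracted first,
    because a stop is a factor of two in signal, not in raw data number.
    """
    signal = raw_count - black_point
    if signal <= 0:
        return math.inf  # at or below black: no signal at all
    headroom = full_scale - black_point
    return math.log2(headroom / signal)


# Highest populated green bucket read off the histogram (about 1700 counts).
green_headroom = stops_below_full_scale(1700)
print(f"green channels: {green_headroom:.2f} stops below full scale")
```

With these rounded inputs the green channels come out between three and a half and four stops below clipping, which is where "almost 4 stops" comes from.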

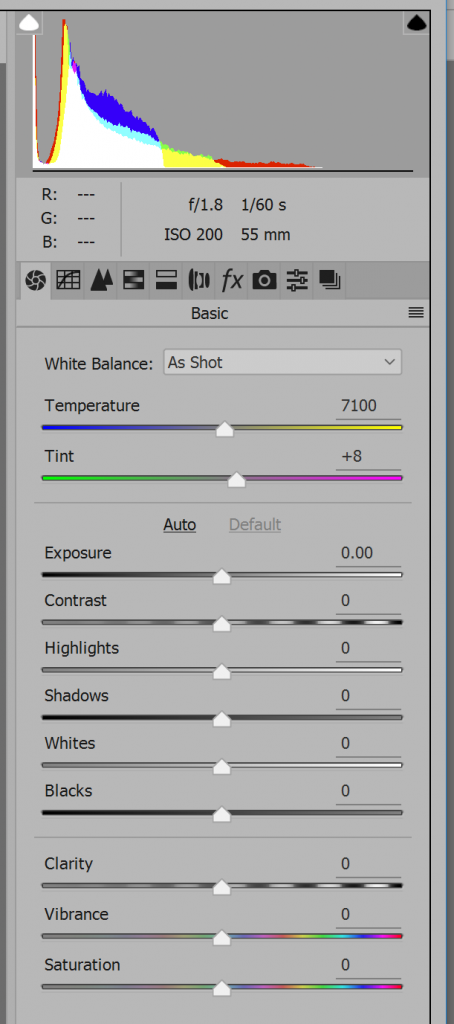

Bringing the image into the current version of Adobe Camera Raw (9.9.0.718) with the controls at default gets us this:

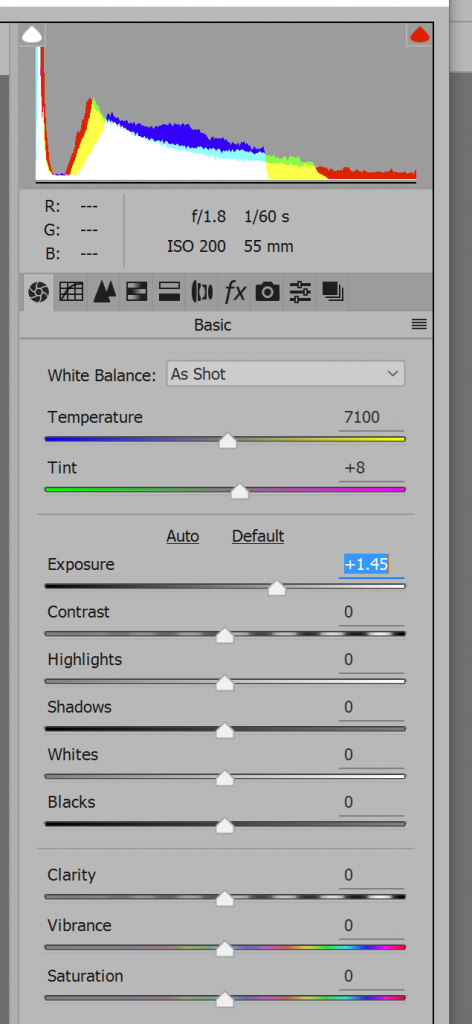

It looks underexposed. Is it more than three stops under? Pushing up the Exposure control until the clipping warning lights up:

It now looks like it is less than one and a half stops underexposed.

There is more than a two stop difference between how you’d judge the exposure using ACR and the real raw data. Lr uses the same engine as ACR, and would thus give the same results. Looking at Lr and ACR usually makes you think a file is more heavily exposed than it is, but not always. There is no substitute for looking at the raw data.

If you looked at the ACR data and weren’t thinking clearly, you might think the red raw channel would be first to clip if you increased the exposure, since it is the first channel to clip after conversion to the ProPhoto RGB primaries that ACR uses. If you look at the raw data, you can see that the green channels would be the first ones to clip.

small typo: “but now always”

Fixed. Thanks.

Good example Jim. With regards to channel clipping comparisons, one should probably look at both after white balance.

Jack

Thanks. I’m not sure what difference it would make to look at the raw data after WB. Clipped is clipped. If the data is clipped before WB, nothing short of making up data can save the highlights. If it’s not clipped before WB, then presumably there is some raw editing process that can keep the converted image from clipping.

Right, input vs output referred. I was referring to the last paragraph of the post, thinking that in most advanced digital cameras today the daylight multipliers are typically something like 2 1 1.3 for the RGB channels respectively. So even though the green channel will reach full scale first in the raw data and the other two may appear to have room to spare, it is sometimes the case that the other two channels will be propelled beyond full scale once the multipliers are applied and the image is prepped for viewing. The procedure is then to clip them all to full scale. In other words Red may not be first to clip in the raw data but it could be first to clip when viewing the displayed image, as LR is indicating.
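A small numeric sketch of that point, assuming the rough daylight multipliers mentioned above (2, 1, 1.3) and a made-up highlight pixel; the pixel values are hypothetical, normalized so that raw full scale is 1.0 after black subtraction.

```python
# Rough daylight white balance multipliers from the comment above.
WB_MULTIPLIERS = {"R": 2.0, "G": 1.0, "B": 1.3}


def white_balance(pixel, multipliers=WB_MULTIPLIERS):
    """Scale each channel by its white balance multiplier (no clipping applied)."""
    return {channel: pixel[channel] * multipliers[channel] for channel in pixel}


def closest_to_clipping(pixel):
    """Return the name of the channel with the largest value."""
    return max(pixel, key=pixel.get)


# Hypothetical highlight pixel: green is nearest full scale in the raw data.
raw_pixel = {"R": 0.60, "G": 0.95, "B": 0.50}
balanced = white_balance(raw_pixel)

print("raw:     ", raw_pixel, "->", closest_to_clipping(raw_pixel))
print("balanced:", balanced, "->", closest_to_clipping(balanced))
```

In this example green leads in the raw data, but red ends up above 1.0 once the multipliers are applied, so a converter that clips at unity would clip red first.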

So here is the philosophical question: wouldn’t the best exposure be the one that ensures that none of the channels are clipped (does not matter whether in raw/PCS/output color space assuming a linear process)? This is a new realization for me but perhaps a completely raw histogram is not the best indicator of ideal exposure after all.

Jack

Jack, I think that a raw processor that, when presented with a raw file which had no clipping, produced an output RGB image which clipped, regardless of the user’s settings, would be a pretty poor excuse for a raw processor. If I designed a raw processor, I’d make sure that intermediate calculations were performed with sufficient precision and scaling that no clipping could occur, either at full scale or at zero. In Matlab I use double precision floating point most of the time so I don’t have to think about overflow or underflow in the intermediate calcs. That may be too computationally expensive for general use, but I understand that Lr uses 32 bit integer precision for most calcs, and it would be possible to design an integer representation that didn’t clip at zero. Heck, maybe they could just use 32 bit signed.

I am not thinking of precision Jim. If the green channel is at full scale in the raw data but the other two are not you are possibly going to have incomplete information in the highlights above full scale after white balance. Normalization will not help there and you may get weird tints in your highlights. To get rid of the tint you need to either reconstruct or clip all channels at full scale.

Alternatively to capture full information you would need to back off exposure so that the white balanced red raw channel is TTR (following the example above). So rather than looking at the raw histogram perhaps it would be better to look at the histogram after white balancing the raw data. Esoteric, I know.

Jack

I’m suggesting that the internal full scale of the raw converter can be well above unity, so that there’s no clipping no matter what happens with the white balance. Of course, when it comes time to do the rendering to RGB, there will be clipping unless the user intervenes. One intervention is to back off the “exposure” control in the raw developer. My thinking is, done right, that will produce less noise in the final image than backing off the actual in-camera exposure settings, because you’ll get more photons on the sensor.

I think when Iliah was talking about normalization, he was talking about some operation that scales all the RGB channels by dividing in linear representation all channel values by the value of the highest pixel in any color plane. That scales the image so that there is no clipping (assuming full scale is unity). By the way, that’s exactly what I do when I make the synthetic slit scans in Matlab.

Jim

Yes, that’s how you do it, divide by largest after white balance. No problem when in floating point.
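For readers who want to see that normalization spelled out, here is a minimal floating-point sketch. The pixel values and multipliers are illustrative assumptions, and `white_balance_and_normalize` is a hypothetical helper, not code from any raw converter.

```python
def white_balance_and_normalize(pixels, multipliers):
    """White balance linear RGB pixels, then divide by the largest value in any plane.

    pixels      -- list of (R, G, B) tuples, linear, black-subtracted, full scale = 1.0
    multipliers -- (R, G, B) white balance multipliers
    """
    balanced = [tuple(v * m for v, m in zip(px, multipliers)) for px in pixels]
    peak = max(max(px) for px in balanced)
    if peak == 0:
        return balanced  # all-black image: nothing to scale
    # One common divisor for every channel, so channel ratios are unchanged.
    return [tuple(v / peak for v in px) for px in balanced]


# Hypothetical unclipped raw pixels and rough daylight multipliers.
pixels = [(0.60, 0.95, 0.50), (0.10, 0.20, 0.15)]
normalized = white_balance_and_normalize(pixels, (2.0, 1.0, 1.3))

for px in normalized:
    print(tuple(round(v, 3) for v in px))
```

Because every channel is divided by the same number, the brightest value lands exactly at full scale and the ratios between channels are preserved, which is why this kind of scaling does not by itself tint the highlights.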

Yes. So you normalize (ETTR) after white balance (let’s forget about possible weird tints in the highlights for now). As an aid to a photographer choosing Exposure, what would be more representative of this situation: the raw histogram or a histogram of something close to a Flat/Neutral Jpeg OOC (LR histogram above)?

For the purpose of this exercise assume all advanced corrections are off and the camera uses just a color matrix to transform white balanced raw data to Adobe RGB (keeping the transformation linear, gamma not changing effective full scale).

Jack

The way I look at it, the best exposure is the one that gets the most photons on the sensor short of clipping a raw channel. That gives the best SNR and is the best starting place for the raw converter. If the raw converter needs to do some normalization, at least it will be doing that with the highest-SNR data.

Jim

WRT highlight tints, normalization as I’ve described in a linear CIE-traceable color space introduces no such shifts. It’s the same as turning down the illumination on a non-self-luminous scene.

Good point on SNR, I’d somehow forgotten about that. So I am back to believing that raw histograms are best for ETTR even though they may not give the photographer a correct indication of what channel is actually closest to clipping after white balance.

The tints appear when one (or more) of the channels are clipped in the raw data before white balance, introducing a nonlinearity in the system. But I’ll leave that discussion for another time.

Jack

Right. I thought you were saying that normalization produced tints. I’m not advocating clipping the raw channels, just pushing the idea of not worrying about what’s going to happen with WB and the rest of it when judging exposure.

Jim

White balance, normalize instead of clipping, apply a curve for midtone placement and highlights compression. But that’s slow, and may be even humiliating.

Yeah, and that’s practically 90% of the way to a ‘neutral/flat’ Jpeg.

I’m intrigued by the data and interpretation you present here. I have some questions that may reflect misunderstandings on my part.

1. In the data presented why is the black clipping point 512 rather than 0?

2. With 512 rather than 0 as the black point and white clipping at 16000, the dynamic range for these raw data is only five stops. This sounds too small to me. What am I misunderstanding here?

3. To my eye the ETTR clipping would happen in the green channels with about 3.3 stops of additional exposure. Is that what you meant by “almost 4 stops”?

4. Following up on Jack Hogan’s comment, the ACR histogram suggests that the gain on the red channel was set considerably higher than that of the blue and green channels to render the demosaiced RGB image’s white balance “as shot.” If this white balance is what the photographer intended, then indeed the red channel would clip first in the image rendered as the photographer intended. Right?

5. I think that the real issue here is not what ETTR means. Indeed the green channels will clip first. But to see this in the rendered-image histogram, we would always have to be looking at the histogram of the “native” white balance of the sensor. In this case that would look much too cyan on the back of the camera and on the computer screen. Is this what you’d really want to see while evaluating your exposure?

>1. In the data presented why is the black clipping point 512 rather than 0?

The blacks are not clipped at 512. The black point for the camera is 512. That’s the way it’s designed. Rather than subtract the black point in the camera, it reports the black point to the raw developer, which can then subtract it, before or after some processing. That, to me, is a much more sensible way to do things than in-camera subtraction.

>2. With 512 rather than 0 as the black point and white clipping at 16000, the dynamic range for these raw data is only five stops. This sounds too small to me. What am I misunderstanding here?

Subtract 512 from all the values. Then you’ll see that the value 513 is about 14 stops down from full scale. 512 is, of course, an infinite number of stops below full scale.
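To make the difference concrete, here is a quick sketch comparing the two ways of counting stops, using the approximate full scale (16000) and black point (512) from the post. `stops_between` is just an illustrative helper.

```python
import math

FULL_SCALE = 16000   # approximate raw full scale from the post
BLACK_POINT = 512    # black point from the post


def stops_between(high, low):
    """Number of stops (factors of two) between two positive signal levels."""
    return math.log2(high / low)


# Treating the raw data numbers themselves as signal (the question's reading).
naive_range = stops_between(FULL_SCALE, BLACK_POINT)

# Subtracting the black point first: 513 is one count above black.
subtracted_range = stops_between(FULL_SCALE - BLACK_POINT, 513 - BLACK_POINT)

print(f"without black subtraction: {naive_range:.1f} stops")
print(f"with black subtraction:    {subtracted_range:.1f} stops")
```

The first number is the roughly five stops in the question; the second is the roughly 14 stops you get once the black point is treated as zero signal.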

>3. To my eye the ETTR clipping would happen in the green channels with about 3.3 stops of additional exposure. Is that what you meant by “almost 4 stops”?

Four stops would be about 1000 after black point subtraction. 1200 is a little more than 1000.

>4. Following up on Jack Hogan’s comment, the ACR histogram suggests that the gain on the red channel was set considerably higher than that of the blue and green channels to render the demosaiced RGB image’s white balance “as shot.” If this white balance is what the photographer intended, then indeed the red channel would clip first in the image rendered as the photographer intended. Right?

Camera sensor space is not a color space, and should be thought of differently than a CIE-traceable color space. I see that I have more to do to explain this well. Give me some time, please.

>5. I think that the real issue here is not what ETTR means. Indeed the green channels will clip first. But to see this in the rendered-image histogram, we would always have to be looking at the histogram of the “native” white balance of the sensor. In this case that would look much too cyan on the back of the camera and on the computer screen. Is this what you’d really want to see while evaluating your exposure?

From an exposure point of view, from an ETTR perspective, all that matters is the raw values. The idea is to not clip information that the raw processor has to work with.

Jim

Interesting article … thanks.

I’m unclear how to incorporate this info into a “best practice workflow”, particularly for an underexposed capture with an underlying wide dynamic range.

My speculation would be perhaps to have RawDigger on one monitor of a dual monitor setup, and ACR/LR on the other (I use ACR for edits, LR for printing).

Then determine a part of the capture you want to just retain non-clipped values, and put an ACR “Color Sampler” at that point? Then increase one or several ACR sliders to get this Sampler to just under clipping … Exposure, Highlights, Whites?

But … can you believe the ACR Sampler values? Or also use RawDigger’s ability to zoom into a small part of the capture, and increase the appropriate ACR sliders based on what RawDigger indicates?

This is an old post, but I’m new to RawDigger and am realising that Lightroom does not show any clipping warning for the green channel – it needs another channel to also clip before you get a clipping warning.

This does not happen in Capture One.

As far as I can remember, C1 won’t tell you when a raw channel is clipped, either. It will tell you when a developed channel is clipped.

Just to clarify: in C1 with the clipping warning enabled, it shows clipped areas pretty much the same as I am seeing in RawDigger. In LR there is no clipping warning showing until you push up the exposure enough to clip more than just the green channel.