Inspired by the last three posts, I’m starting a series of posts on spatial considerations in color vision, with an emphasis on what’s important to photographers. We’ll get started today with an illustration:

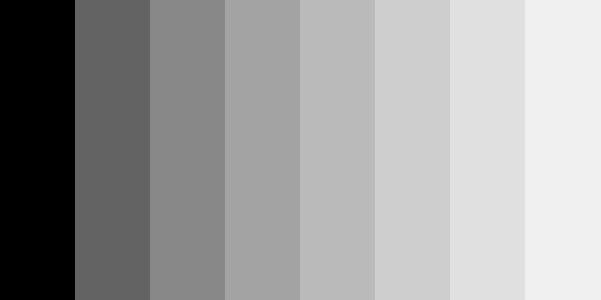

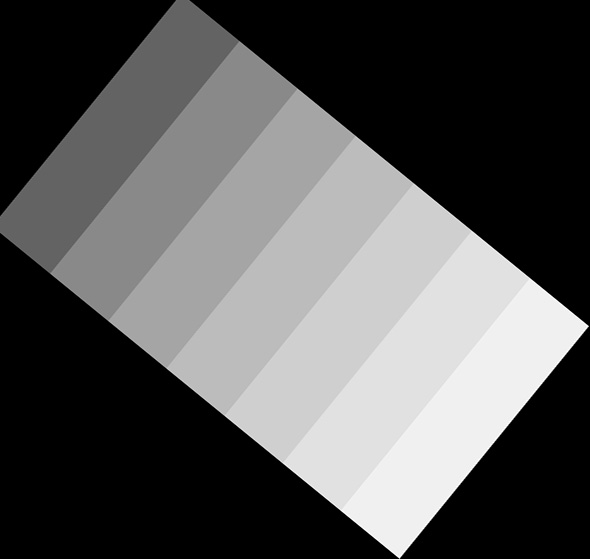

Take a good look at this image from a normal viewing distance:

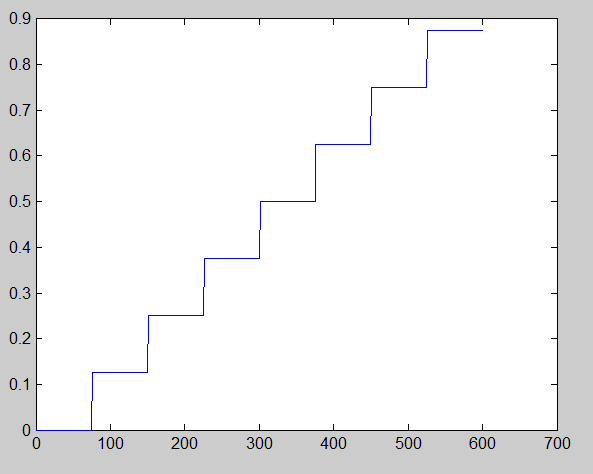

The bars are even steps along the grey axis in a linear RGB color space, so if you plotted the linear values as a graph with full scale being 1.0 and the column index increasing from left to right, here’s what you’d see:
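For concreteness, here is a minimal sketch of how such a staircase of linear values could be generated. The function name, the bar count, and the bar width are illustrative placeholders, and the wedge is assumed to run from 0 up to full scale; the actual image may use different numbers.

```python
def step_wedge(num_bars=8, bar_width=4):
    """Return one row of linear grey values in [0, 1], one entry per column.

    num_bars and bar_width are hypothetical values chosen for illustration.
    """
    # Evenly spaced levels along the grey axis, from 0.0 up to full scale (1.0).
    levels = [i / (num_bars - 1) for i in range(num_bars)]
    # Repeat each level across the columns that make up its bar.
    return [level for level in levels for _ in range(bar_width)]


if __name__ == "__main__":
    row = step_wedge()
    for column, value in enumerate(row):
        print(column, round(value, 3))
```

Plotting the printed values against the column index gives a plain staircase: flat treads, equal risers, and nothing extra at the edges.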

But that’s not what it looks like, is it? It looks more like the intensities go like this:

Get up real close to your screen and look at the step wedge above. You can still see the overshoot that’s not really there, right? Now back way up and look at it from across the room. Unless you’ve got a pretty big room, you can still see the phantom overshoots.

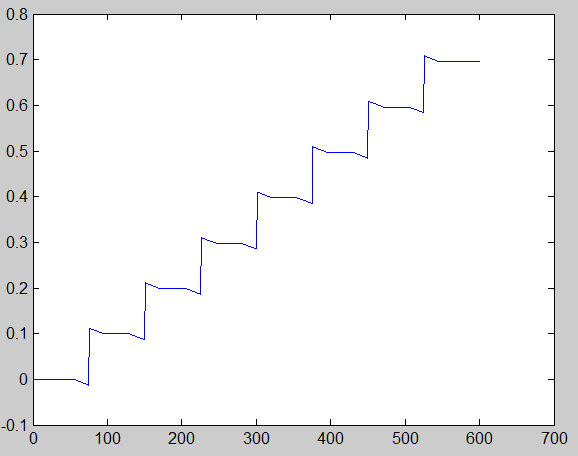

But if we take an image like this, which is just a smaller version of the step wedge, and you back up from it quite a ways, it looks like a smooth gradient:

The overshoots that aren’t really there are called Mach bands, after Ernst Mach, who died about a hundred years ago and also gave his name to the Mach number, which expresses a velocity as a multiple of the speed of sound.

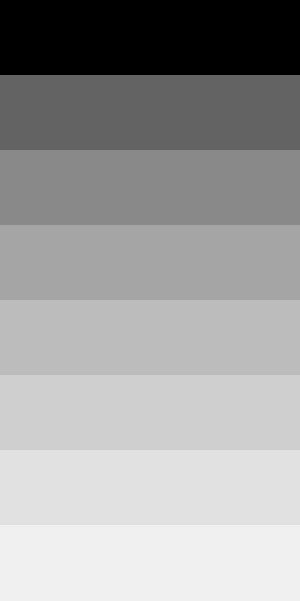

Mach bands are surprisingly robust. You can’t think them away by reminding yourself that they’re not real. They look the same if you rotate the step wedge by 90 degrees:

Or some arbitrary angle:

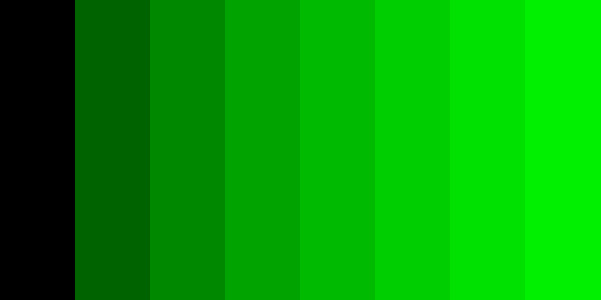

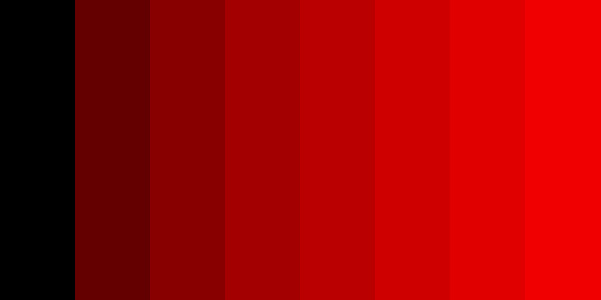

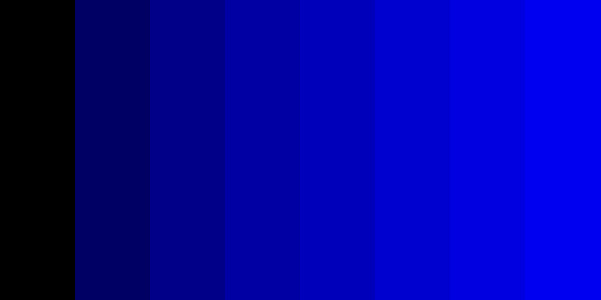

The phenomenon is independent of color, but harder to see in the blue image, given the relative paucity of short-wavelength (aka blue) cone cells:

That’s our first lesson: the human vision system produces its internal definition of brightness by spatially differentiating light intensity for large features, and integrating light intensity for small features.
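One common textbook way to picture the "differentiating" half of that lesson is a center-surround filter: a narrow blur minus a fraction of a wide blur. The sketch below is a toy model under that assumption, not a claim about how the visual system is actually wired, and the function names, sigmas, and surround weight are arbitrary values picked for illustration. Fed a staircase whose bars are much wider than the filter, it produces a response that dips on the dark side of each edge and peaks on the bright side, even though the input is perfectly flat within each bar.

```python
import math


def gaussian_kernel(sigma, radius):
    """Normalized 1-D Gaussian weights for offsets -radius..radius."""
    weights = [math.exp(-(x * x) / (2 * sigma * sigma))
               for x in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def convolve_clamped(signal, kernel):
    """Filter a 1-D signal, repeating the end values past the borders."""
    radius = len(kernel) // 2
    last = len(signal) - 1
    out = []
    for i in range(len(signal)):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), last)
            acc += w * signal[j]
        out.append(acc)
    return out


def center_surround(signal, sigma_center=1.0, sigma_surround=4.0,
                    surround_weight=0.5):
    """Toy lateral-inhibition response: narrow blur minus part of a wide blur.

    All three parameters are hypothetical, chosen only to make the effect visible.
    """
    radius = int(3 * sigma_surround)
    center = convolve_clamped(signal, gaussian_kernel(sigma_center, radius))
    surround = convolve_clamped(signal, gaussian_kernel(sigma_surround, radius))
    return [c - surround_weight * s for c, s in zip(center, surround)]


if __name__ == "__main__":
    bar_width = 40  # much wider than the surround, i.e. a "large feature"
    levels = [0.0, 1 / 3, 2 / 3, 1.0]
    wedge = [level for level in levels for _ in range(bar_width)]
    response = center_surround(wedge)

    # Look at the second bar: the input is constant there, the response is not.
    bar = response[bar_width:2 * bar_width]
    print("middle of bar:", bar[bar_width // 2])
    print("lowest value in bar:", min(bar))
    print("highest value in bar:", max(bar))
```

The "integrating" half corresponds to the blurs themselves: once the bars become narrow compared with the center blur, neighboring steps are averaged together and the individual edges lose their prominence.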

Neat! Mach Banding has practical applications in design, and more importantly, could also be attributed to some false diagnostics in medical imaging. I wrote a Mac app to help identify the issue with an image histogram to show the actual light properties vs perception. I’d like to know what you thought! Thanks for the article!