Today we’re back to simulated images of the ISO 12233 target. Here are the camera parameters:

- Perfect lens

- 64-bit floating point resolution

- Target resolution: 10000×6667 pixels

- Target size in camera pixels: 1500×1000

- No photon noise

- No read noise

- No pixel response non-uniformity

- 100% fill factor

- No focus error

- Bayer pattern color filter array: RGGB

- No anti-aliasing filter

- Demosaiced in Matlab with bilinear interpolation

Here’s the topic for the day, which I hope to gain some insight into with the camera simulator. I’ve noticed that in the real camera images of the ISO 12233 target, and in the simulated images that we’ve seen as well, there are often large changes in the character of the false-color regions of the image. These false colors are generated by the interaction of the target image projected on the sensor, the Bayer pattern in the color filter array, the sensels, and the demosaicing operation in the raw processor. They are a form of aliasing, though not the classical one.
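To make that interaction concrete, here is a minimal sketch in plain Python (not the Matlab simulator used for the images in this post). It assumes an idealized sensor in which every sensel records the gray level of a neutral target, an RGGB layout, and a textbook bilinear demosaic written as a normalized 3×3 weighted average. The function names and the one-pixel-wide line target are illustrative assumptions, not anything taken from the simulator.

```python
R, G, B = 0, 1, 2

def cfa_channel(row, col):
    """Channel recorded at (row, col) for an RGGB Bayer pattern."""
    if row % 2 == 0:
        return R if col % 2 == 0 else G
    return G if col % 2 == 0 else B

def line_target(height, width, shift=0):
    """Neutral vertical lines, one pixel wide, moved right by `shift` pixels."""
    return [[1.0 if (c - shift) % 2 == 0 else 0.0 for c in range(width)]
            for _ in range(height)]

# Bilinear weights: red/blue use the full 3x3 tent, green uses the cross.
KERNEL_RB = [[1, 2, 1], [2, 4, 2], [1, 2, 1]]
KERNEL_G = [[0, 1, 0], [1, 4, 1], [0, 1, 0]]

def demosaic_bilinear(raw):
    """Fill in all three channels at every pixel from same-channel neighbors."""
    h, w = len(raw), len(raw[0])
    out = [[[0.0, 0.0, 0.0] for _ in range(w)] for _ in range(h)]
    for r in range(h):
        for c in range(w):
            for ch in (R, G, B):
                kernel = KERNEL_G if ch == G else KERNEL_RB
                total = weight = 0.0
                for dr in (-1, 0, 1):
                    for dc in (-1, 0, 1):
                        rr, cc = r + dr, c + dc
                        inside = 0 <= rr < h and 0 <= cc < w
                        if inside and cfa_channel(rr, cc) == ch:
                            k = kernel[dr + 1][dc + 1]
                            total += k * raw[rr][cc]
                            weight += k
                out[r][c][ch] = total / weight
    return out

# The target is neutral, so the raw mosaic is just the gray level at each sensel.
unshifted = demosaic_bilinear(line_target(8, 8, shift=0))
shifted = demosaic_bilinear(line_target(8, 8, shift=1))

print("interior pixel, no shift:       ", unshifted[2][2])
print("interior pixel, one-pixel shift:", shifted[2][2])
```

In this toy case the target contains no color at all, yet the interior of the demosaiced image comes out strongly red-orange with the lines on the even columns and strongly blue with the lines on the odd columns. The detail in the target is the same in both runs; only its position relative to the color filter array has changed.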

In examining the images of the ISO 12233 target, the differences in the false-color patterns are the first thing you notice. I have seen people who do testing ascribe significance to these patterns, and I have been tempted to do it myself. But can the false-color patterns offer any insight into the sharpness of an image-capturing system? I doubted it, and I set out to create an experiment to test my thoughts.

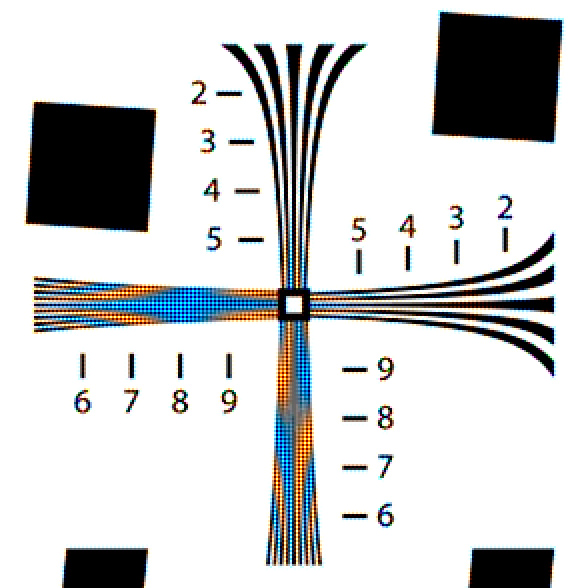

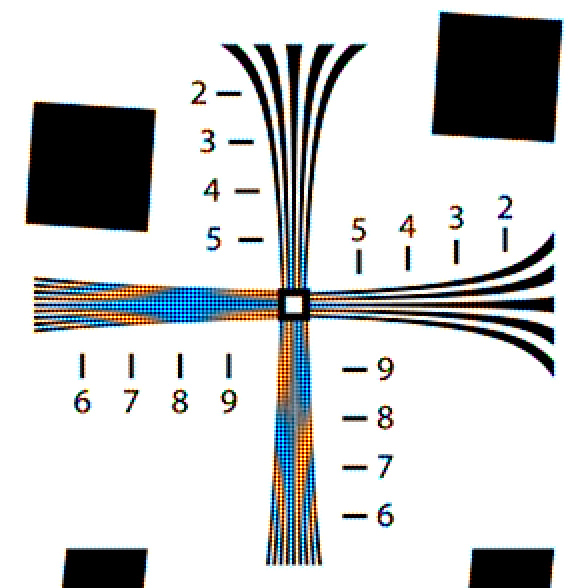

I created this image (a crop of the ISO 12233 target to the region that we’ve been concentrating on, magnified 2x using nearest neighbor so that the details will hold up under JPEG compression) with the camera parameters as above:

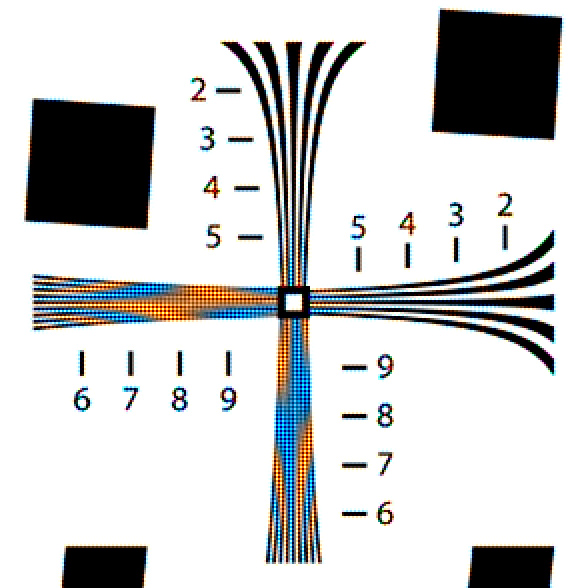

Then I displaced the target image by two camera pixels horizontally and vertically, and ran the sim again:

Quite a difference.

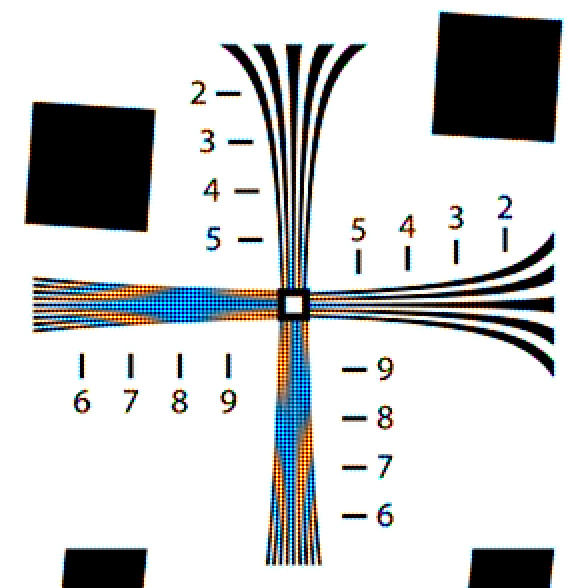

A two-camera-pixel horizontal displacement only affects the vertical lines:

A one-camera-pixel horizontal displacement shows large changes:

Even a half-camera-pixel horizontal displacement shows changes:

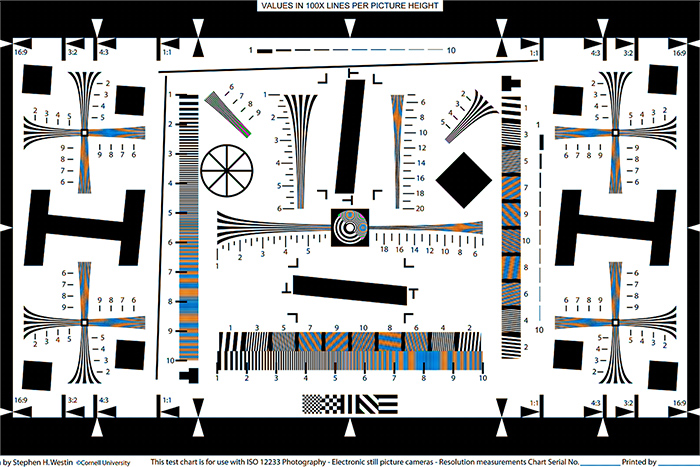

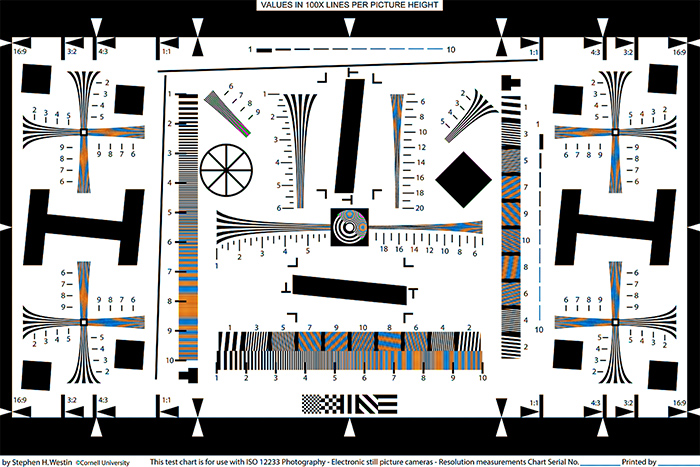
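The half-pixel case is easier to picture in one dimension. The sketch below is a simplified illustration, not the simulator's code: it assumes a target oversampled by an integer factor of 8 per camera pixel (the setup in this post has 10000/1500, or about 6.67, target pixels per camera pixel), a 100% fill factor modeled as a plain average over each sensel's width, and a bar pattern with a period of three camera pixels. All names and numbers here are hypothetical.

```python
def bar_target(n_samples, period, shift=0):
    """1-D black/white bars sampled at target resolution, moved right by `shift` samples."""
    half = period // 2
    return [1.0 if (i - shift) % period < half else 0.0 for i in range(n_samples)]

def integrate_sensels(target, oversample):
    """100% fill factor: each sensel averages the target samples it covers."""
    n_sensels = len(target) // oversample
    return [sum(target[k * oversample:(k + 1) * oversample]) / oversample
            for k in range(n_sensels)]

OVERSAMPLE = 8           # target samples per camera pixel (assumed)
PERIOD = 3 * OVERSAMPLE  # bar period of three camera pixels (assumed)

aligned = integrate_sensels(bar_target(48, PERIOD, shift=0), OVERSAMPLE)
half_px = integrate_sensels(bar_target(48, PERIOD, shift=OVERSAMPLE // 2), OVERSAMPLE)

print("no shift:        ", aligned)
print("half-pixel shift:", half_px)
```

Every sensel that sits on a bar edge reads a different value after the half-pixel move. Since alternating sensels sit under different color filters, the demosaicer is handed a different set of red, green, and blue samples, and the false colors change with them.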

You don’t have to pixel-peek to see the differences in the false-color patterns caused by displacements. Here’s the whole target with no displacement:

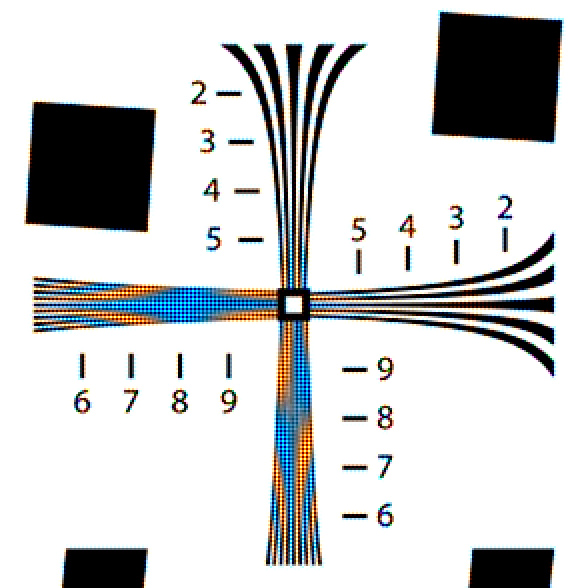

And with a two-pixel displacement both vertically and horizontally:

There is some aliasing caused by res-ing down the 1500-pixel-wide image to 700 pixels wide for the web, but the differences are striking.

Since displacement does not affect sharpness, and does affect the false-color patterns, my conclusion is that we can’t learn anything about sharpness from the nature of the false-color patterns. That’s not to say that their presence or absence is unimportant, or even their strength. But seeing one false-color pattern in one image and another false-color pattern in another says nothing about relative sharpness.

Are there one-, two-, or even larger pixel shifts in real images with tripod-mounted cameras? You betcha. If you touch a 36-megapixel camera on a tripod, even with a short lens, you will see a shift in the next image. You haven’t seen them in the images I’ve posted before this post (although you can in the images above), because I’ve corrected for them, but they were there.
