Yesterday we saw that the Sony a7RII long exposure noise reduction (LENR, aka dark-frame subtraction) is applied at shutter speeds so short that it hurts the engineering dynamic range of the image. That experiment was performed at ISO 3200.

It just didn’t seem right that the Sony engineers would do something like that. To be fair, the right time to introduce LENR is a function of sensor temperature and ISO setting. I have only indirect control over the sensor temperature, so I thought to do a set of dark field images at ISO 100.

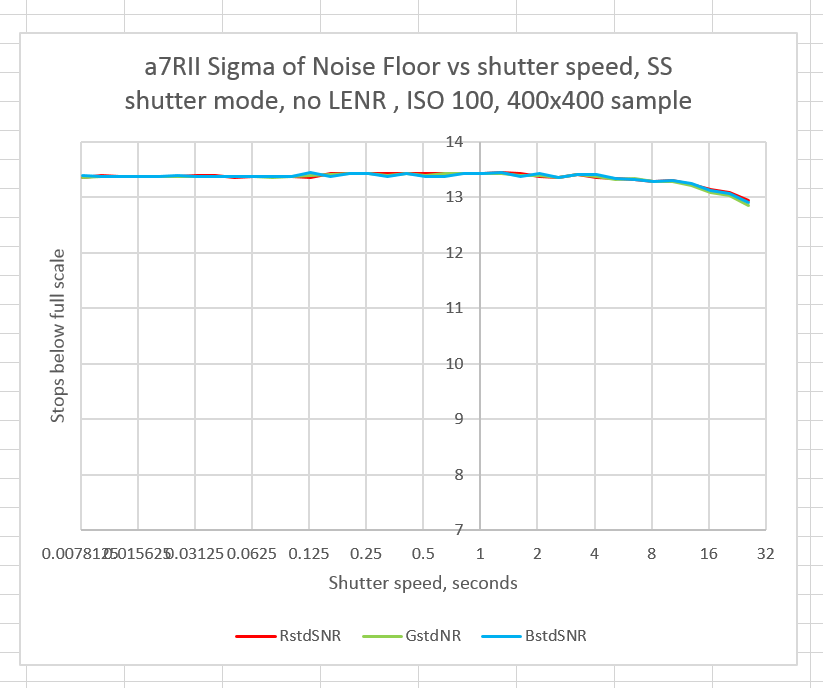

First, with no LENR:

Here’s how to interpret the graph. The horizontal axis is exposure time in seconds, with 1/125 second at the leftmost point, and 30 seconds (more or less) at the rightmost. It’s a log scale, with each vertical line one stop apart. The vertical axis is the standard deviation of a 400×400 pixel central sample. This corresponds to the root-mean-square value of the noise, which is the most widely accepted, but not the only, measure of noise. This is a fairly easy measurement to make in RawDigger if the camera does not subtract the black level before writing the raw file, which is the case with the a7x cameras. You need to tell RawDigger not to subtract off the black level, though.
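For readers who want to reproduce the measurement outside RawDigger, here is a minimal sketch of the statistic itself. It assumes the raw values of one channel are already available as a list of rows; the function names `central_patch` and `rms_noise` are made up for this illustration and are not part of RawDigger or any raw-decoding library.

```python
import math

def central_patch(image, size=400):
    """Return the size x size block at the center of a 2-D list of raw values."""
    rows, cols = len(image), len(image[0])
    if size > rows or size > cols:
        raise ValueError("patch is larger than the image")
    top = (rows - size) // 2
    left = (cols - size) // 2
    return [row[left:left + size] for row in image[top:top + size]]

def rms_noise(patch):
    """Population standard deviation of every value in the patch."""
    values = [v for row in patch for v in row]
    mean = sum(values) / len(values)
    return math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))

# Tiny synthetic example: a 6x6 frame whose central 2x2 block sits
# around a black level of 512 (a hypothetical value).
frame = [[0] * 6 for _ in range(6)]
frame[2][2], frame[2][3] = 510, 514
frame[3][2], frame[3][3] = 514, 510
noise = rms_noise(central_patch(frame, size=2))
```

Because the mean is removed before squaring, a constant black-level offset does not change the result. Leaving the offset in the data presumably matters because subtracting it first would clip the values that fall below black, which would distort the statistic.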

The vertical axis is also a log scale, and shows how many stops the noise level is from saturation of the sensor/ADC system. Since less noise is better, you want the noise level as many stops below full scale as possible. Thus, values of the lines towards the top of the graph are better.
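The conversion from a noise figure to stops below full scale is a base-2 logarithm of a ratio. The sketch below shows that arithmetic only. The function name and the example numbers are hypothetical, not measurements from the a7RII, and it assumes full scale is expressed in the same units as the noise, counted above the black level.

```python
import math

def stops_below_full_scale(noise_rms, full_scale):
    """Number of stops (factors of two) between full scale and the RMS noise."""
    if noise_rms <= 0 or full_scale <= 0:
        raise ValueError("both arguments must be positive")
    return math.log2(full_scale / noise_rms)

# Hypothetical numbers: full scale of 16384 counts, RMS noise of 2 counts.
example = stops_below_full_scale(2.0, 16384.0)
```

Doubling the noise moves the result down by exactly one stop, which is why a log vertical axis makes stop-sized changes easy to read off the graph.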

It is apparent that, at ISO 100, the a7RII doesn’t really need LENR. Let’s see what happens when you turn it on.

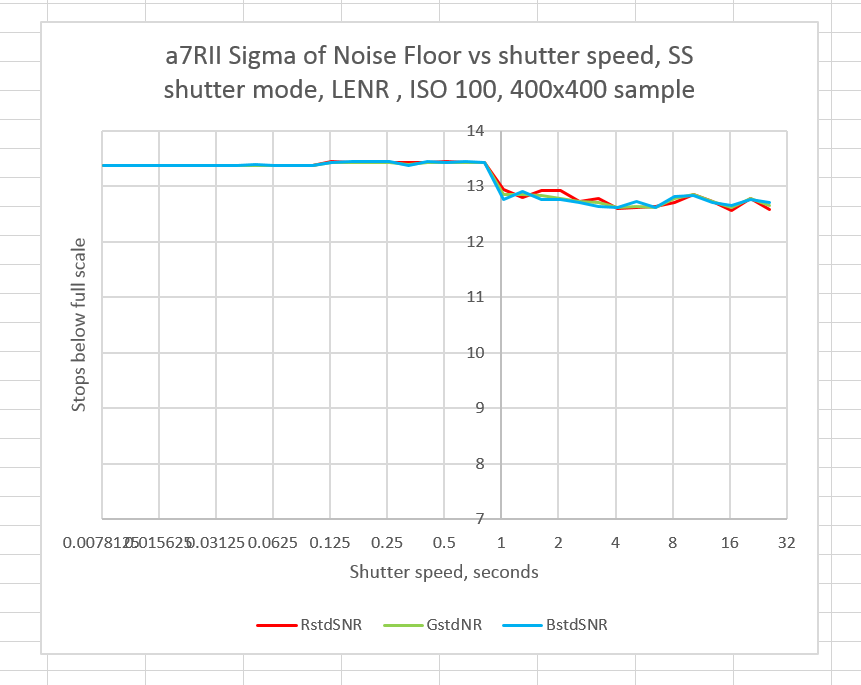

At every shutter speed of one second or longer, the noise level with LENR on is worse than with it off. Nothing but bad can come from selecting LENR at ISO 100 on the Sony a7RII.

Some caveats are in order. This applies at an ambient temperature of about 25 degrees C, with little self heating. It applies to one sample. And it applies if the noise metric is the standard deviation of the noise. Noise metrics that weight outliers more heavily might favor LENR.

A reader, in a comment to this post, raised the issue that the area I selected for the graphs might not contain any hot pixels. If so, it would get none of the benefit that LENR was created to provide, while still suffering the damage: a half stop more uncorrelated noise from the dark-frame subtraction, plus the coarser precision that Sony uses when LENR is on.
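The half-stop figure follows from adding uncorrelated noise in quadrature: subtracting two frames with equal noise multiplies the standard deviation by the square root of two, and log2 of that is 0.5. Here is a small sketch that checks this both analytically and with a simulation. It assumes Gaussian noise of equal strength in the light and dark frames, and the function names are invented for the example.

```python
import math
import random
import statistics

def subtraction_penalty_stops(sigma_light, sigma_dark):
    """Noise increase, in stops, from subtracting an uncorrelated dark frame."""
    combined = math.hypot(sigma_light, sigma_dark)  # quadrature sum
    return math.log2(combined / sigma_light)

def simulate_subtraction(sigma=2.0, n=20_000, seed=1):
    """Return (std of light frame, std of light minus dark) for Gaussian noise."""
    rng = random.Random(seed)
    light = [rng.gauss(0.0, sigma) for _ in range(n)]
    dark = [rng.gauss(0.0, sigma) for _ in range(n)]
    diff = [a - b for a, b in zip(light, dark)]
    return statistics.pstdev(light), statistics.pstdev(diff)

penalty = subtraction_penalty_stops(2.0, 2.0)
std_light, std_diff = simulate_subtraction()
```

The simulated ratio `std_diff / std_light` should land close to 1.414. If the dark frame were less noisy than the light frame, the penalty would be smaller than half a stop.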

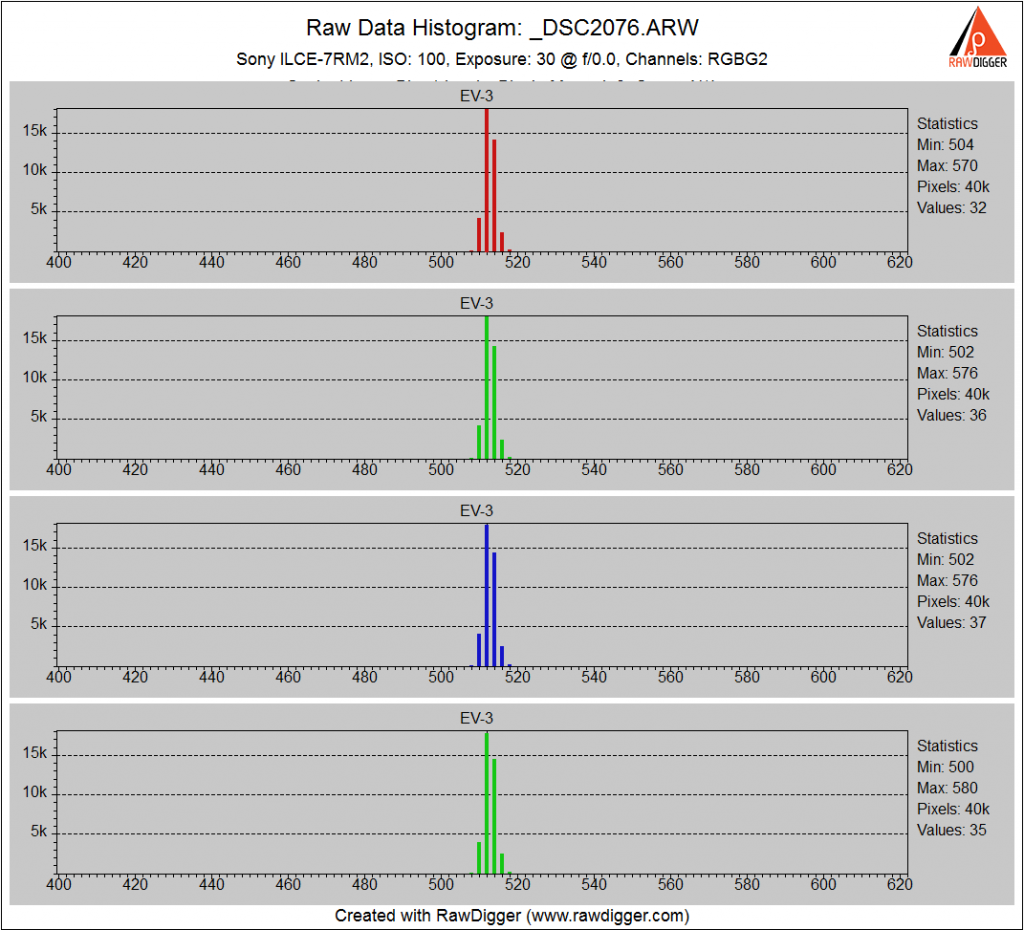

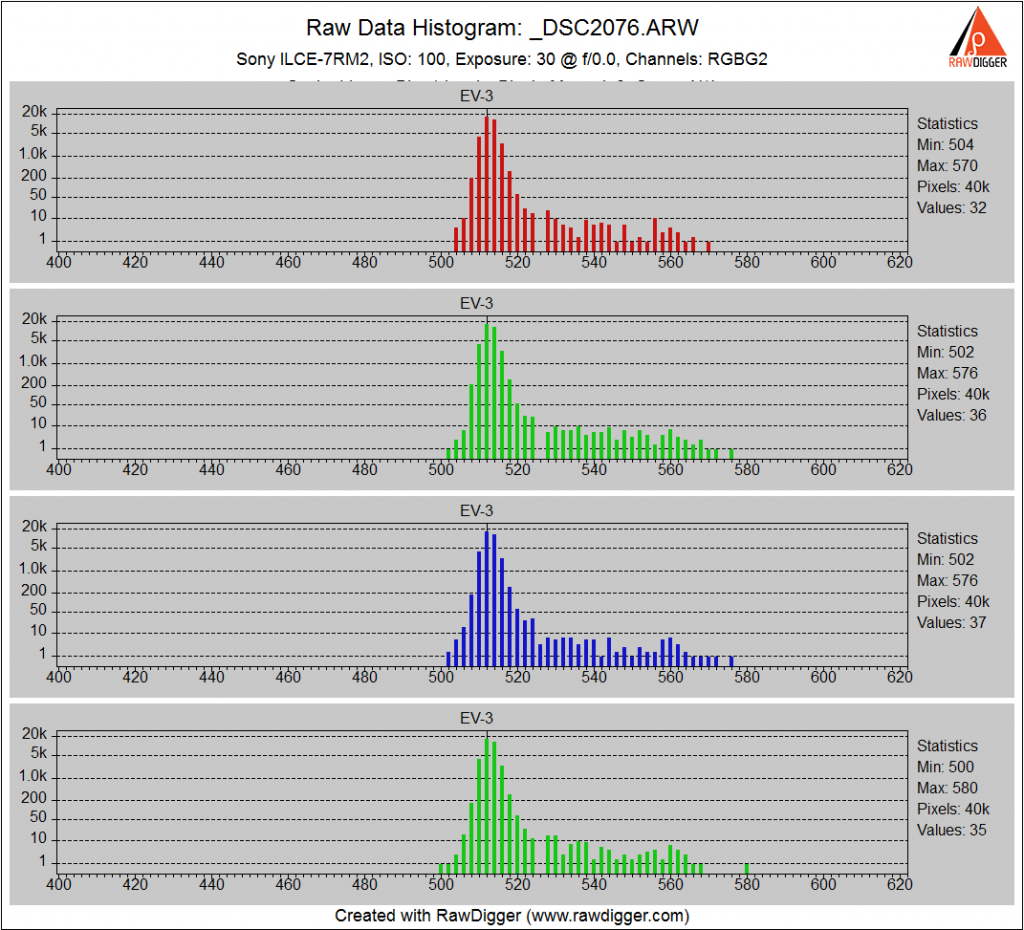

Here’s a histogram of the central region of the non-LENR image with a 30 second exposure:

The histogram looks pretty Gaussian, indicating that hot pixels may not be much of a problem.

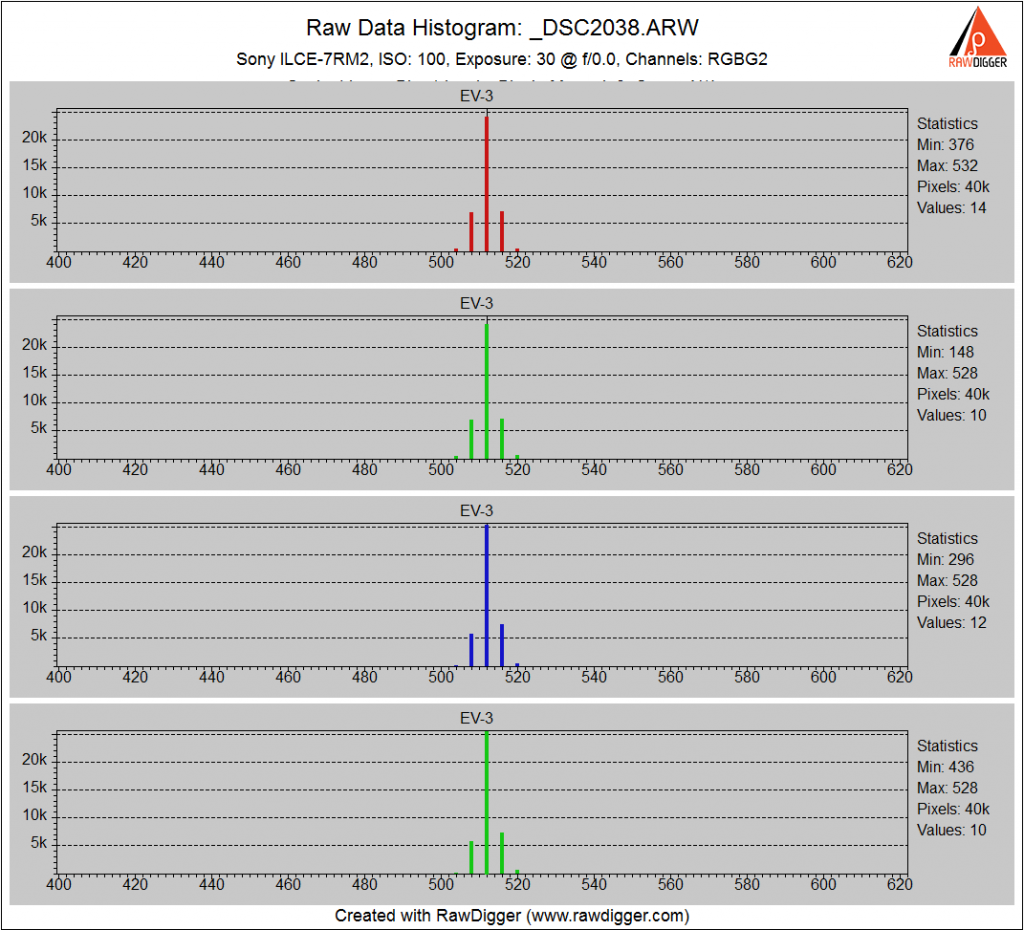

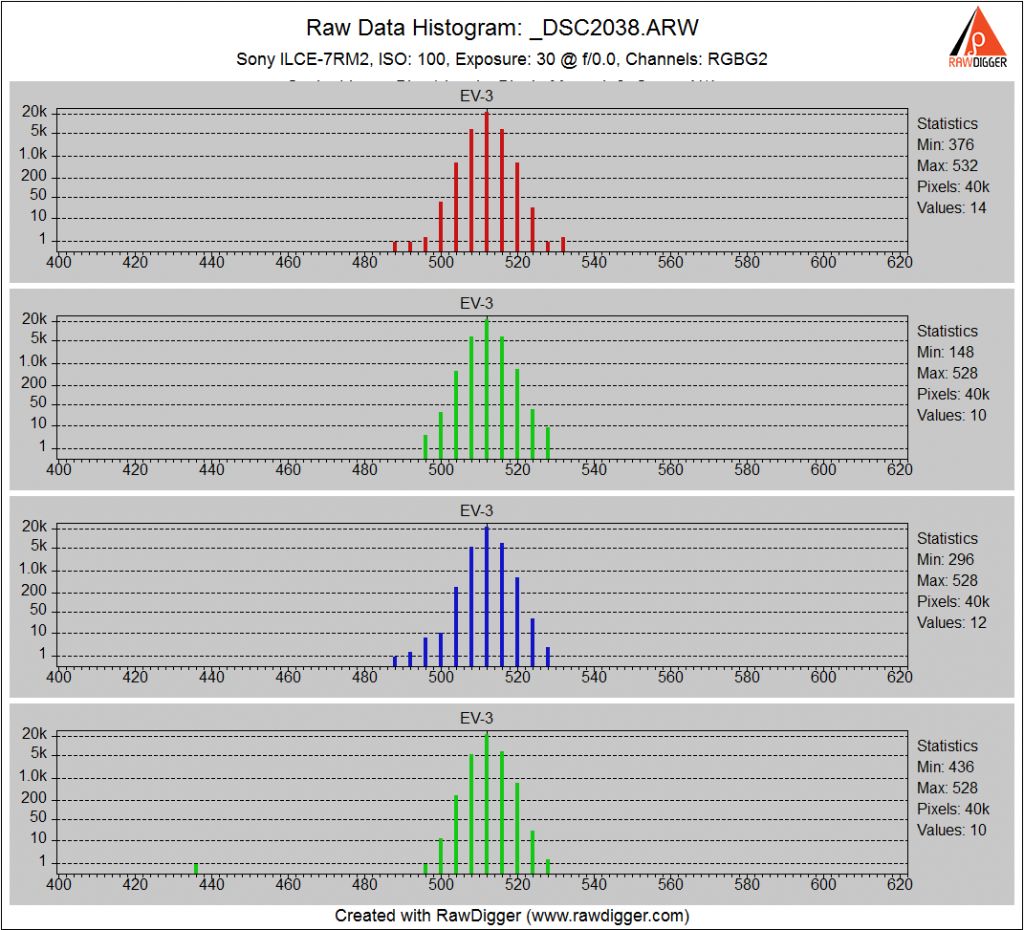

With LENR:

Again, we just see the broadening of the histogram due to the increased uncorrelated noise and don’t see an improvement in the hot pixels that we couldn’t find in the original image.

But let’s dig a little deeper and look at a pair of histograms with a log vertical axis.

First with no LENR:

Now we can see the hot pixels in the form of the extended rightward tail of the histogram.

When we invoke LENR:

The hot pixels are cleaned up, but the histogram has a poorer standard deviation. LENR is doing what it is supposed to do, but the gain in hot pixel suppression is outweighed by the loss in increased uncorrelated noise and the drop in precision from 13 to 12 bits.
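The trade-off can be illustrated with a toy simulation. This is a sketch under made-up assumptions, not a model of the a7RII: Gaussian read noise, a small fraction of hot pixels that add the same fixed offset to the light and dark frames, no shot noise on the dark current, and no change in bit depth. All parameter values and the name `simulate_patch` are hypothetical.

```python
import random
import statistics

def simulate_patch(n_pixels=40_000, read_sigma=2.0, hot_fraction=0.0005,
                   hot_level=50.0, seed=7):
    """Compare a dark-field patch with and without dark-frame subtraction."""
    rng = random.Random(seed)
    # Fixed per-pixel offsets: hot pixels repeat from frame to frame.
    hot = [hot_level if rng.random() < hot_fraction else 0.0
           for _ in range(n_pixels)]
    # Each frame gets its own independent noise on top of the offsets.
    light = [rng.gauss(0.0, read_sigma) + h for h in hot]
    dark = [rng.gauss(0.0, read_sigma) + h for h in hot]
    lenr = [a - b for a, b in zip(light, dark)]  # offsets cancel, noise adds
    return {
        "std_no_lenr": statistics.pstdev(light),
        "std_lenr": statistics.pstdev(lenr),
        "max_no_lenr": max(light),
        "max_lenr": max(lenr),
    }

result = simulate_patch()
```

With these numbers the subtraction removes the tail (the maximum drops sharply) but the standard deviation rises, which is the same pattern as in the histograms above. Raising `hot_fraction` far enough reverses the standard-deviation result, so the outcome depends on how many hot pixels the patch contains and how bright they are.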

Except for cameras with banding, dark frame subtraction isn’t useful for normal shadow noise, rather it’s for hot pixels.

The hot pixels that dark frame subtraction is intended to eliminate—do they significantly affect the standard deviation of the measurement patch? There might happen to be one or no hot pixels in the center in your copy of the camera, and so you only lose the half stop of dynamic range from subtracting two equally noisy, uncorrelated signals.

An astute observation. I’ll add some histograms to the post of the region in question.

Just to clarify, for me:

Hot pixels are pixels that are over sensitive to the incoming photons? So over a progressively longer exposure more pixels become hot, as the sensitivity distribution of the pixels is presumably Gaussian. One would hope in a very tall, narrow distribution. Some, very few, will be hot at normal exposures? But in a previous post you explained that the dark frame was intended to remove dark current leakage, electrons “pretending” to be photons. So are the hot pixels not hot at all but just in the “wrong” place on the chip to be affected by the leakage of dark current?

Bottom line: is dark current causing hot pixels and all pixels are created equal?

Hot pixels are pixels that get brighter ~proportional(?) to the duration of the exposure, in addition to their normal response to light. In short exposures you basically never see them.

I don’t think I’ve ever encountered a pixel that was more sensitive to light than other pixels; if that were true, sensor manufacturers would be trying really hard to make them *all* like that.

However, the reverse does exist, a dead pixel that doesn’t respond much (if at all) to light.

I don’t know the exact mechanism by which hot pixels happen, but it might be anomalously high dark leakage current on certain photodiodes.

So no, not all pixels are equal.

I was just getting around to writing an answer to Chris’ comment, but you beat me to it, and a good job you did. The commonly accepted name for variation in pixel response to light is photon response non-uniformity (PRNU). I usually measure it for cameras I’m testing. I haven’t yet measured it for the a7RII. The reason I’m not very motivated to measure it on the new camera is that it’s usually very low on modern cameras. Standard deviations of under 0.5% are common.

And yes, dark (leakage) current is the usual cause of hot (as opposed to stuck) pixels, AFAIK.

Jim

Thank you both. Loose terminology (not yours) has led me astray. Yes, I did fall into the same trap, saying “over sensitive” meaning, as you say, proportionally more responsive. Again, though, you would think that would be exploited; I suspect DR is the loser there, stopping that.