In this post, we saw a pronounced self-heating effect in the dark noise of successive 30-second exposures with the Sony a7RII. I repeated the test with the predecessor camera, the a7R, and found far less effect.

A reader postulated that the difference might be due to lower thermal conductivity between the sensor and the camera chassis because of the in-body image stabilization (IBIS) in the a7RII. That made sense to me, so I thought that I’d run a test with the a7II, which also has IBIS, to see if it showed radical increases in dark-field noise when producing long sequences of 30-second exposures.

I set the camera’s shutter speed to 30 seconds, which is as long as it will go. I set the shutter mode to single shot. I set the ISO to 3200, which is higher than I’d use myself, but seems to be some kind of astrophotography standard. I hooked the camera to an intervalometer that was set to 1 second, so that would be the greatest time interval between exposures. I turned off lens corrections and IBIS. I turned off long exposure noise reduction. I stopped the lens down to f/22 and affixed the lens cap. I made 140 exposures.

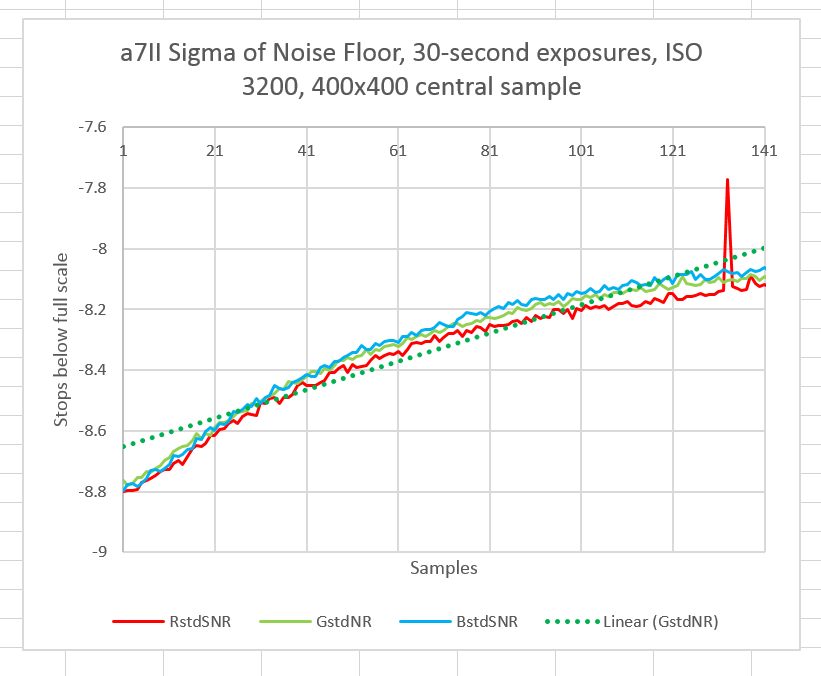

I measured the standard deviation of a central 400×400 pixel sample:

[Note: the original curve set posted was inadvertently done at ISO 800. Thanks to Horshack from DPR for pointing out the error.]

The effect is less pronounced than with the a7RII: 0.8 stops on this curve vs 1.4 or 1.5 on the a7RII curve. It looks like the difference comes either from the sensor in the a7RII having different characteristics from those in the a7R and a7II, or from the a7RII putting out more heat. Since the battery life is more-or-less the same, I’m betting on the former.
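For readers who want to try this kind of measurement themselves, here is a minimal sketch of the central-crop standard deviation and its conversion to stops. It is not the tool used for the curves in this post. It assumes the raw frame has already been loaded as a 2-D NumPy array of undemosaiced sensor values, that the color filter array is RGGB, and that "stops" means the base-2 log of the ratio of two standard deviations. The function names are made up for illustration.

```python
import numpy as np

def central_crop(raw, size=400):
    """Return the central size x size region of a 2-D raw frame.

    The offsets are rounded down to even numbers so that the Bayer
    phase of the crop matches that of the full frame.
    """
    h, w = raw.shape
    top = ((h - size) // 2) & ~1
    left = ((w - size) // 2) & ~1
    return raw[top:top + size, left:left + size]

def channel_sigmas(raw, size=400):
    """Standard deviation of each Bayer plane in the central crop.

    Assumes an RGGB layout (an assumption; check the CFA pattern
    of the camera before trusting the channel labels).
    """
    crop = central_crop(raw, size).astype(np.float64)
    return {
        "R": crop[0::2, 0::2].std(),
        "G1": crop[0::2, 1::2].std(),
        "G2": crop[1::2, 0::2].std(),
        "B": crop[1::2, 1::2].std(),
    }

def stops_increase(sigma_first, sigma_last):
    """Noise growth in stops, taken as log2 of the sigma ratio."""
    return float(np.log2(sigma_last / sigma_first))
```

Under that definition, a 0.8-stop rise means the standard deviation grew by a factor of about 1.74 (2 to the power 0.8), and a 1.4-stop rise means a factor of about 2.64.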

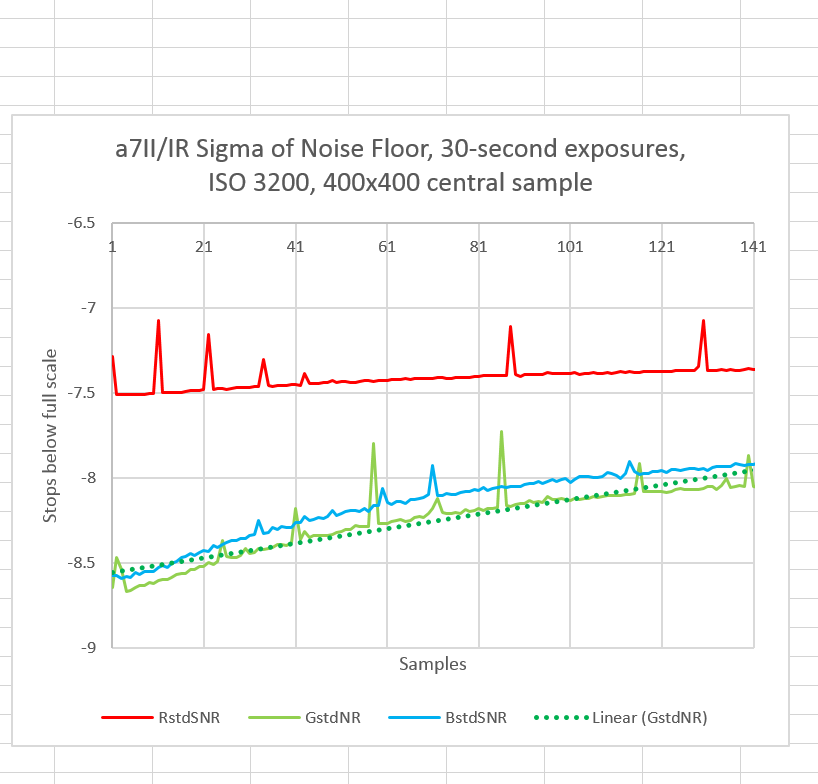

Here’s something that’s interesting. It doesn’t relate to the main point of these tests, but it may indicate a caveat for the test method. When I first ran the above sequence, I picked up an a7II that had been modified for infrared photography without realizing it. I got these curves:

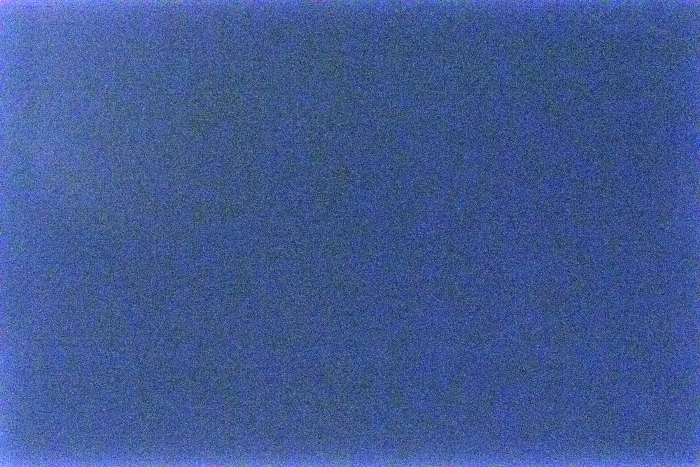

Notice the increased noise in the red channel. I went back and looked at the sample images. The channel discrepancy varies with the location of the 400×400 sample square in the frame. The camera is making an image of something, but I can’t tell what. Looking at the demosaiced image doesn’t help much.

At this point, this is a mystery to me.

[The material below was added later]

Horshack, from DPR, analyzed the raw files from the IR camera and discovered that the variation in the sigma levels was due to a population of hot pixels. The red ones happened to dominate in the 400×400 sample region chosen. I still don’t know why this camera has so many hot pixels.
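One way to check for this kind of thing is to count outliers per raw channel in the sample region. The sketch below is my own illustration, not Horshack's analysis. It assumes a 2-D NumPy array of undemosaiced values, an RGGB layout, even `top` and `left` offsets, and an arbitrary threshold of six robust sigmas above the median, with the robust sigma estimated from the median absolute deviation.

```python
import numpy as np

def hot_pixel_counts(raw, top, left, size=400, k=6.0):
    """Count pixels far above the median in each Bayer plane of a region.

    top and left should be even so the RGGB labels stay correct
    (RGGB itself is an assumption). k is an arbitrary threshold in
    units of a MAD-based robust sigma.
    """
    region = raw[top:top + size, left:left + size].astype(np.float64)
    planes = {
        "R": region[0::2, 0::2],
        "G1": region[0::2, 1::2],
        "G2": region[1::2, 0::2],
        "B": region[1::2, 1::2],
    }
    counts = {}
    for name, plane in planes.items():
        med = np.median(plane)
        # 1.4826 * MAD approximates sigma for Gaussian noise and is
        # barely affected by a small population of outliers.
        robust_sigma = 1.4826 * np.median(np.abs(plane - med))
        counts[name] = int(np.count_nonzero(plane > med + k * robust_sigma))
    return counts
```

Running this at several region positions would show whether the dominant channel changes with location. A handful of very bright pixels is enough to inflate the plain standard deviation of one channel while leaving the others untouched.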

Kirlian photography, perhaps? If so, perhaps Steve Huff might be able to shed some (ahem) light on this for you…