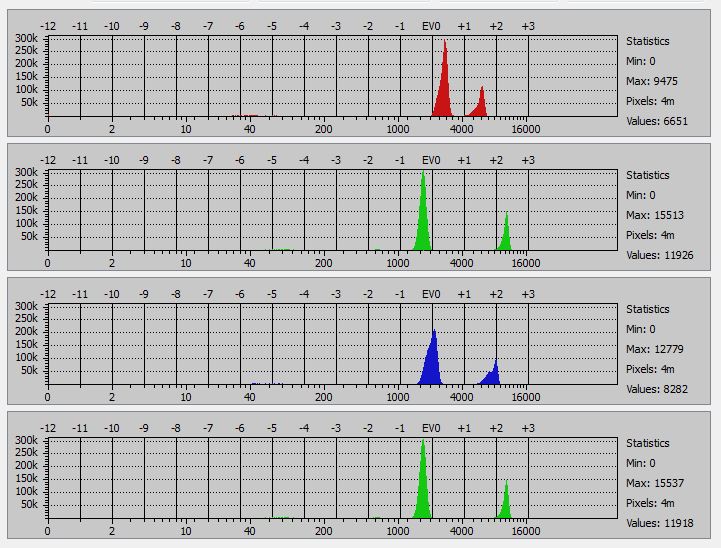

I mounted a 135mm lens on my D4. That's the longest lens I can use and still get the whole target square in the image. I took a picture of a random magenta square to see how tight the raw histograms would be:

The Rawdigger histograms aren’t super tight, but that’s about all I can do without moving my display to a larger room (look only at the lower big bumps):

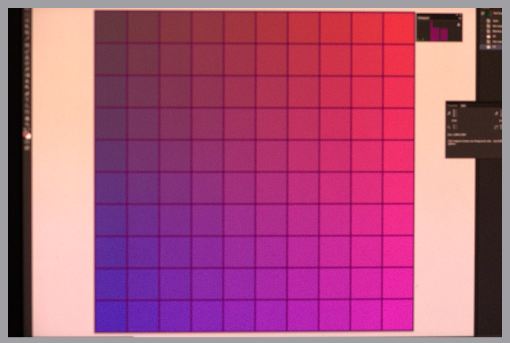
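One way to put a number on how tight a raw histogram is: take the mean and standard deviation of the raw values in a channel, and divide the second by the first to get the coefficient of variation. That ratio lets you compare channels of different brightness. The sketch below assumes you have already exported one channel's raw values from a sample as a plain list of numbers. The function name and the values are hypothetical; they are not measured data and not Rawdigger output.

```python
import statistics

def channel_spread(values):
    """Return (mean, population std dev, coefficient of variation) for one channel's raw values."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    return mean, sd, sd / mean

# Hypothetical raw values for one channel of a sample (not measured data).
green = [1000, 1010, 990, 1005, 995]
mean, sd, cv = channel_spread(green)
print(f"mean={mean:.1f} sd={sd:.2f} cv={cv:.3%}")  # prints: mean=1000.0 sd=7.07 cv=0.707%
```

A smaller coefficient of variation corresponds to a narrower histogram bump.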

Then I photographed the entire test target, which I created as described in the previous post:

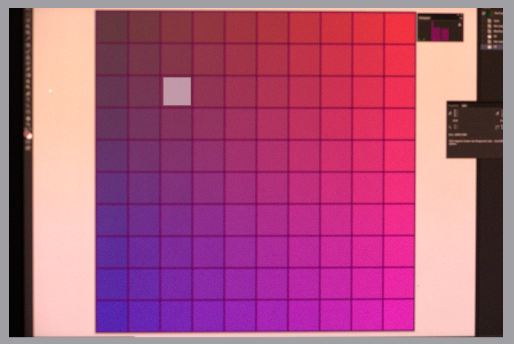

I brought that image into Rawdigger and moved the cursor over the sub-squares to find the one closest to the neutral axis. When I found what looked like the right one, I made a square sample:

Then I looked at the average data of the sample:

This looks pretty close.

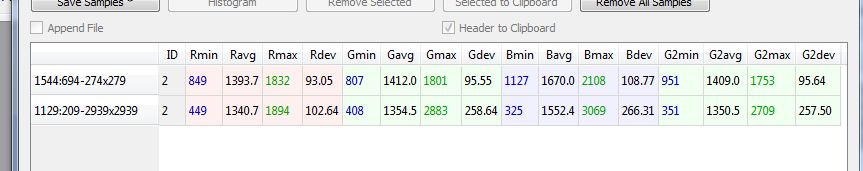
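Here is a rough sketch of what averaging a square sample and judging its distance from the neutral axis might look like on raw data. It makes several assumptions. The undemosaiced mosaic is available as a 2-D list. The color filter array is RGGB with red at even-row, even-column sites; check the actual layout for your camera. "Neutral" means equal raw channel means, and distance from neutral is measured here as the distance of (R/G, B/G) from (1, 1). The function names and the 4x4 mosaic are made up for illustration.

```python
import math

def average_rggb(mosaic, top, left, height, width):
    """Average the R, G (both green sites), and B photosites in a rectangle.

    Assumes an RGGB mosaic with red at even-row, even-column sites.
    The rectangle must be at least 2x2 so every channel is present.
    """
    sums = {"R": 0.0, "G": 0.0, "B": 0.0}
    counts = {"R": 0, "G": 0, "B": 0}
    for row in range(top, top + height):
        for col in range(left, left + width):
            if row % 2 == 0:
                channel = "R" if col % 2 == 0 else "G"
            else:
                channel = "G" if col % 2 == 0 else "B"
            sums[channel] += mosaic[row][col]
            counts[channel] += 1
    return {ch: sums[ch] / counts[ch] for ch in sums}

def distance_from_neutral(means):
    """Distance of (R/G, B/G) from (1, 1); 0.0 means all raw channel means are equal."""
    r_over_g = means["R"] / means["G"]
    b_over_g = means["B"] / means["G"]
    return math.hypot(r_over_g - 1.0, b_over_g - 1.0)

# Hypothetical 4x4 raw sample (not measured data).
mosaic = [
    [1000, 1010, 1002, 1008],
    [990, 980, 994, 984],
    [1004, 1012, 998, 1006],
    [992, 982, 996, 986],
]
means = average_rggb(mosaic, top=0, left=0, height=4, width=4)
print(means)                                   # {'R': 1001.0, 'G': 1001.0, 'B': 983.0}
print(round(distance_from_neutral(means), 4))  # 0.018
```

To choose among several sub-squares, you would compute this distance for each sample and keep the one with the smallest value.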

Just to make sure that I was on the right track, I created a layer in the test image and filled it with R=100, G=64, B=100, which is the value of the selected sub-square. I brought that image into Rawdigger, left the selection exactly where it was, and measured the stats. Then I made another selection covering most of the square and measured the stats. Here are the results, with the small sample on top:

It looks like we have picked up a little blue, for reasons that escape me, but at least the small square and the big square aren’t too far off.
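A comparison like this can be boiled down to a couple of numbers: the percent change in each channel mean between the two selections, and how far B/G sits above 1. This is a minimal sketch using hypothetical channel means, not the actual measurements; the function names are made up for illustration.

```python
def percent_change(small, large):
    """Percent change of each channel mean going from the small sample to the large one."""
    return {ch: 100.0 * (large[ch] - small[ch]) / small[ch] for ch in small}

def blue_excess_percent(means):
    """How far B/G is above 1.0, in percent; positive means blue-heavy."""
    return 100.0 * (means["B"] / means["G"] - 1.0)

# Hypothetical channel means (not the actual measurements).
small = {"R": 1000.0, "G": 1000.0, "B": 1020.0}
large = {"R": 1010.0, "G": 1000.0, "B": 1030.0}

for ch, pct in percent_change(small, large).items():
    print(f"{ch}: {pct:+.2f}%")
print(f"blue excess: small {blue_excess_percent(small):.1f}%, large {blue_excess_percent(large):.1f}%")
```

Normalizing to green keeps the blue-heaviness check independent of overall exposure.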

I then moved the camera in close enough that I could see nothing that wasn’t in the test square, made an exposure, brought it into Rawdigger, selected most everything, and read out the stats:

Still blue-heavy, but in reasonable agreement with the immediately-previous image. It looks like this might be a stable enough process to get real close in the next iteration.

Stay tuned.
